Chapter 24. Virtualization

24.1. Synopsis

Virtualization software allows multiple operating systems to run simultaneously on the same computer. Such software systems for PCs often involve a host operating system which runs the virtualization software and supports any number of guest operating systems.

After reading this chapter, you will know:

- The difference between a host operating system and a guest operating system.
- How to install FreeBSD on the following virtualization platforms:
  - Parallels Desktop (Apple® macOS®)
  - VMware Fusion (Apple® macOS®)
  - VirtualBox™ (Microsoft® Windows®, Intel®-based Apple® macOS®, Linux)
  - bhyve (FreeBSD)
- How to tune a FreeBSD system for best performance under virtualization.

Before reading this chapter, you should:

- Understand the basics of UNIX® and FreeBSD.
- Know how to install FreeBSD.
- Know how to set up a network connection.
- Know how to install additional third-party software.

24.2. FreeBSD as a Guest on Parallels Desktop for macOS®

Parallels Desktop for Mac® is a commercial software product available for Apple® Mac® computers running macOS® 10.14.6 or higher. FreeBSD is a fully supported guest operating system. Once Parallels has been installed on macOS®, the user must configure a virtual machine and then install the desired guest operating system.

24.2.1. Installing FreeBSD on Parallels Desktop on Mac®

The first step is to create a new virtual machine for installing FreeBSD.

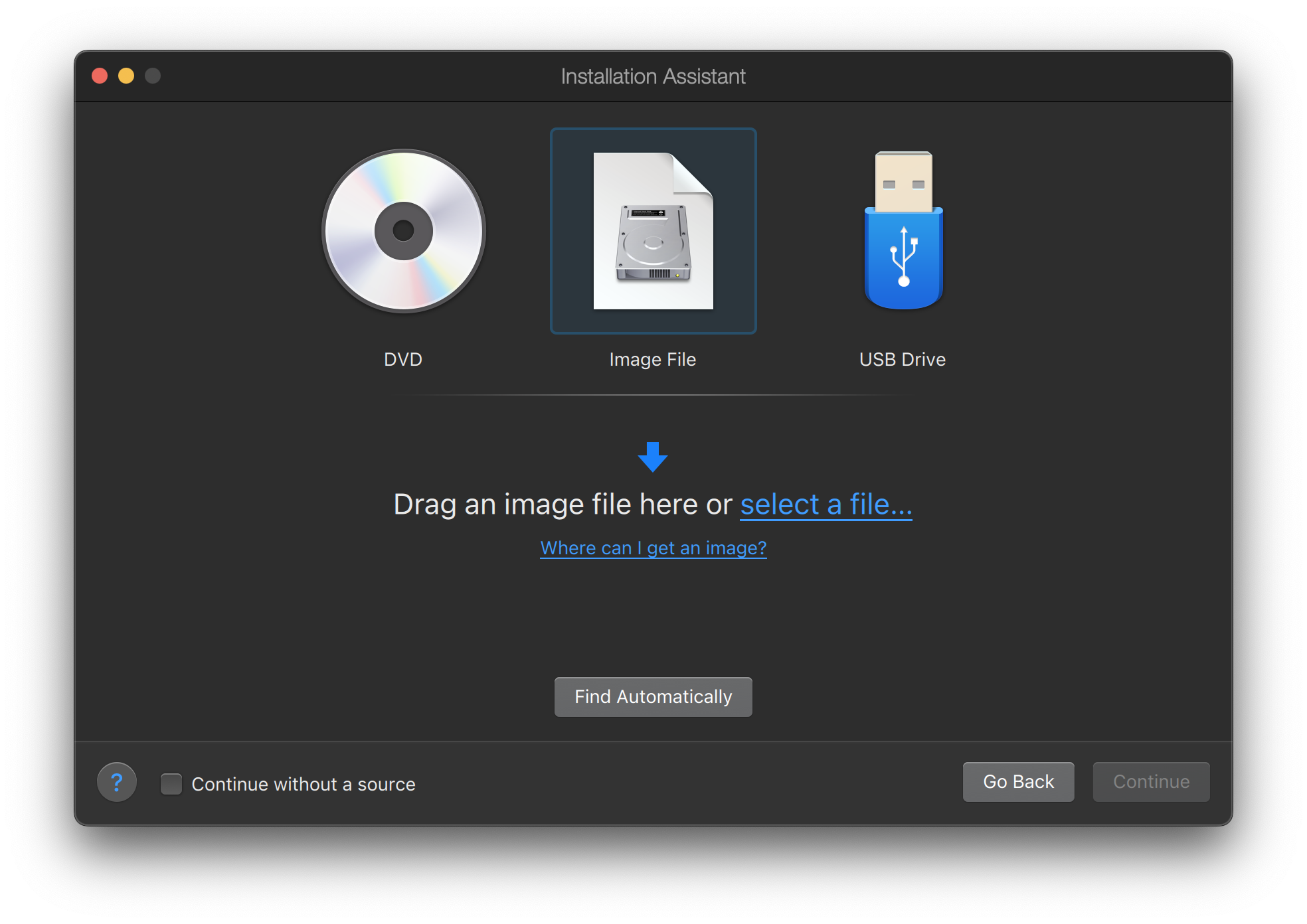

Choose Install Windows or another OS from a DVD or image file and proceed.

Select the FreeBSD image file.

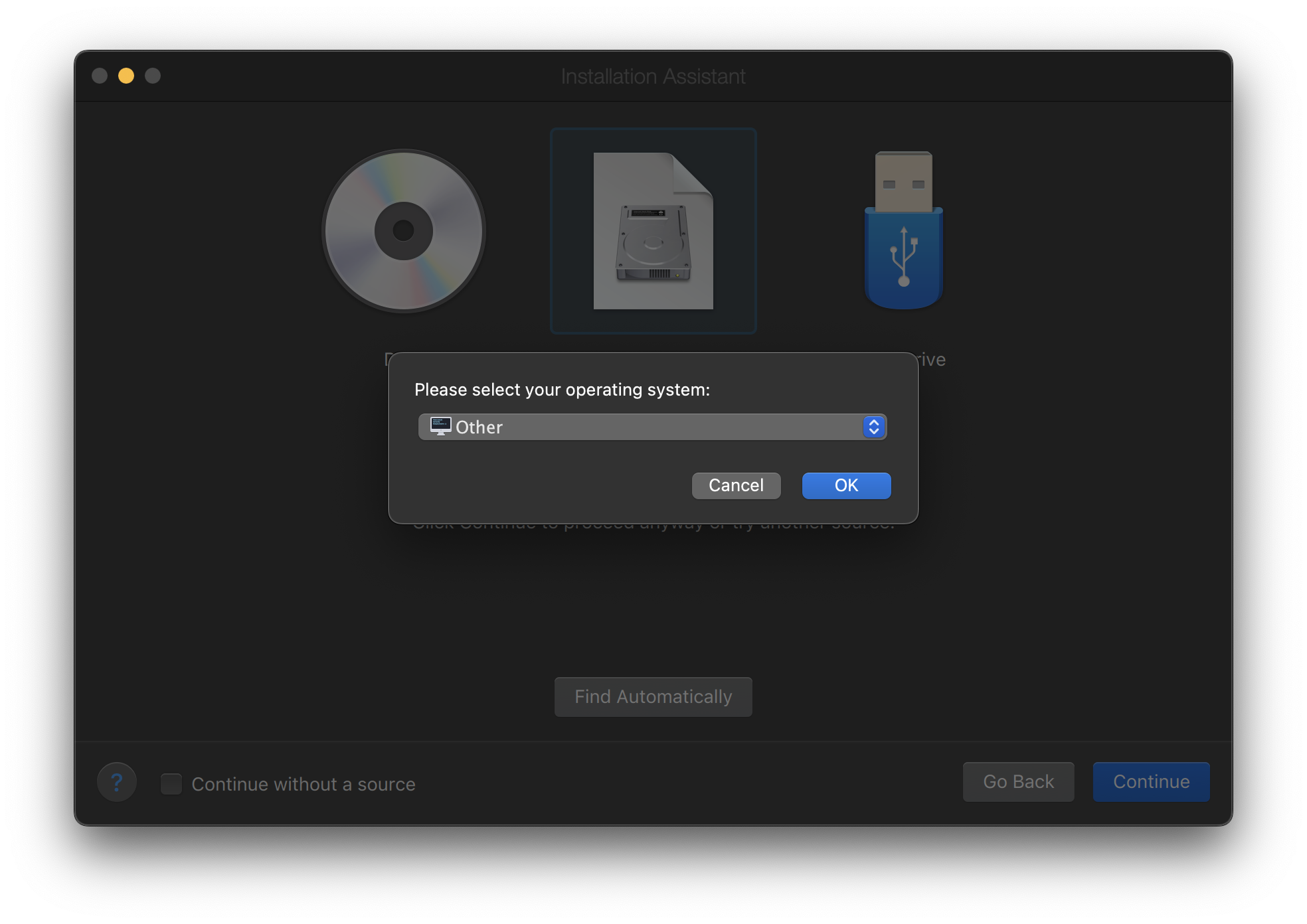

Choose Other as operating system.

Choosing FreeBSD as the operating system type will cause a boot error on startup.

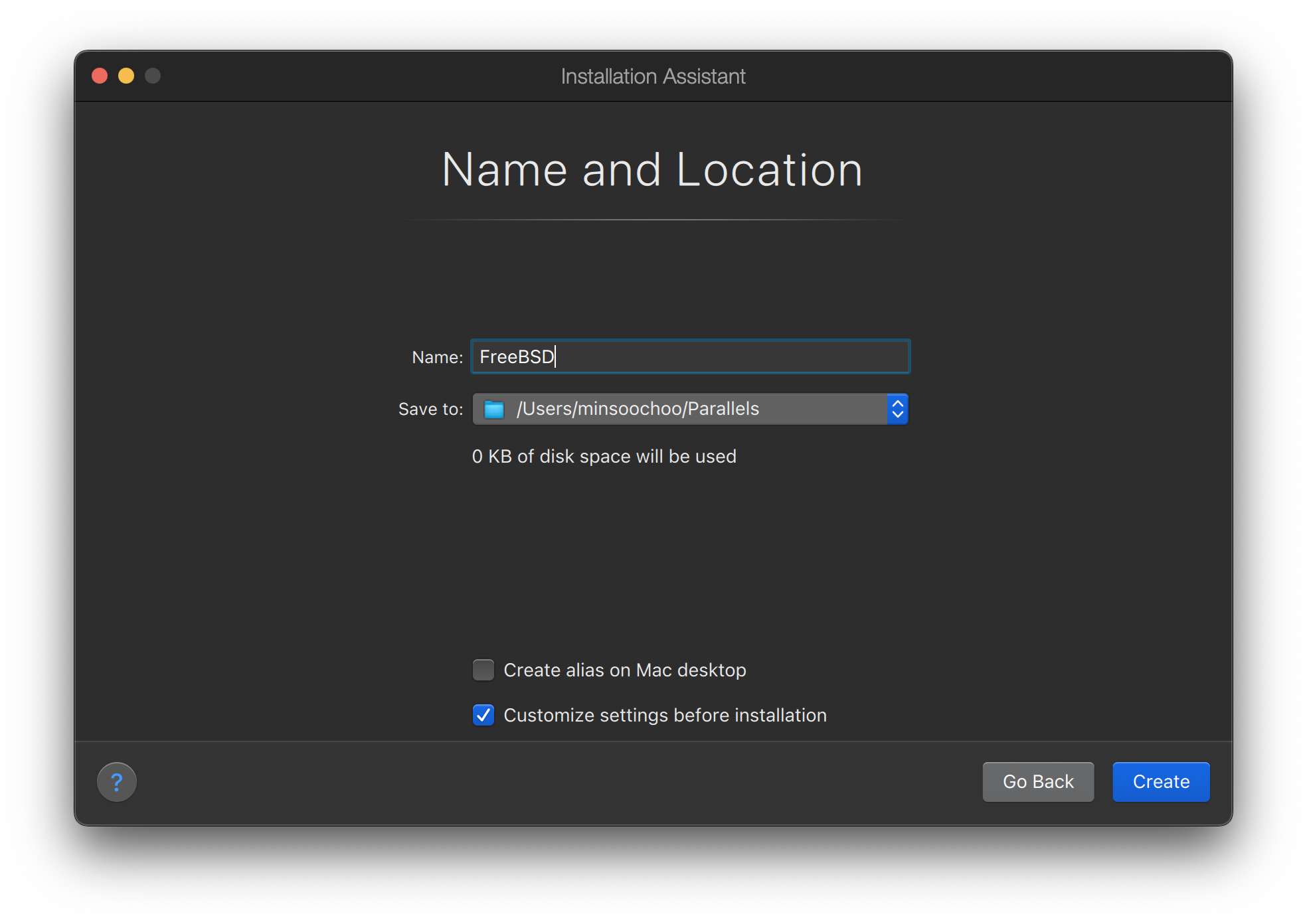

Name the virtual machine and check Customize settings before installation.

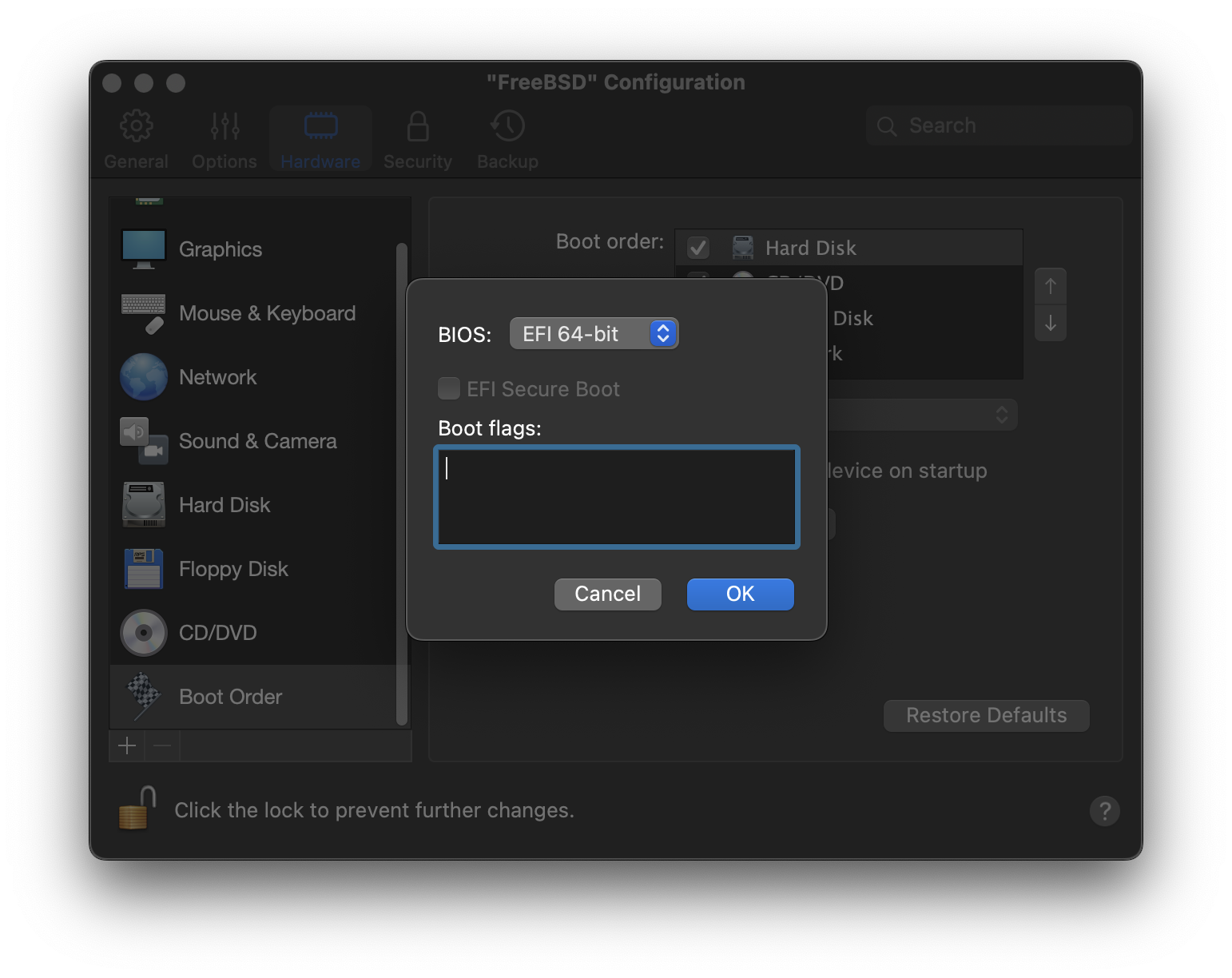

When the configuration window pops up, go to the Hardware tab, choose Boot order, and click Advanced. Then, choose EFI 64-bit as the BIOS.

Click OK, close the configuration window, and click Continue.

The virtual machine will automatically boot. Install FreeBSD following the general steps.

24.2.2. Configuring FreeBSD on Parallels

After FreeBSD has been successfully installed on macOS® with Parallels, there are a number of configuration steps that can be taken to optimize the system for virtualized operation.

- Set Boot Loader Variables

  The most important step is to lower the kern.hz tunable, which reduces the CPU utilization of FreeBSD under the Parallels environment. This is accomplished by adding the following line to /boot/loader.conf:

  kern.hz=100

  Without this setting, an idle FreeBSD Parallels guest will use roughly 15% of the CPU of a single-processor iMac®. After this change, the usage will be closer to 5%.

- Create a New Kernel Configuration File

- Configure Networking

  The most basic networking setup uses DHCP to connect the virtual machine to the same local area network as the host Mac®. This can be accomplished by adding ifconfig_ed0="DHCP" to /etc/rc.conf. More advanced networking setups are described in Advanced Networking.
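Appending the kern.hz=100 line by hand works, but repeating the step leaves duplicate entries in /boot/loader.conf. The following is a minimal POSIX sh sketch, assuming a simple NAME=VALUE file format such as /boot/loader.conf; set_conf_var is a hypothetical helper, not a FreeBSD tool. It replaces an existing assignment instead of adding a second one. On FreeBSD, sysrc(8), used later in this chapter, covers the same need for rc.conf.

```sh
#!/bin/sh
# set_conf_var FILE NAME VALUE  (hypothetical helper, not a FreeBSD tool)
# Sets NAME=VALUE in FILE: the first existing "NAME=" line is replaced,
# later duplicates are dropped, and the line is appended if it is missing.
# VALUE is written verbatim, so add quotes yourself where the file format
# expects them (for example '"DHCP"' in rc.conf).
set_conf_var() {
	local file="$1" name="$2" value="$3" tmp
	touch "$file" || return
	tmp=$(mktemp "${file}.XXXXXX") || return
	awk -v n="$name" -v v="$value" '
		index($0, n "=") == 1 { if (!done) print n "=" v; done = 1; next }
		{ print }
		END { if (!done) print n "=" v }
	' "$file" > "$tmp" || { rm -f "$tmp"; return 1; }
	# Copy back with cat so the original file keeps its owner and mode.
	cat "$tmp" > "$file"
	rm -f "$tmp"
}

# Example (as root in the guest):
#   set_conf_var /boot/loader.conf kern.hz 100
#   set_conf_var /etc/rc.conf ifconfig_ed0 '"DHCP"'
```

Running the example twice leaves exactly one kern.hz line, which makes the step safe to repeat from a provisioning script.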

24.3. FreeBSD as a Guest on VMware Fusion for macOS®

VMware Fusion for Mac® is a commercial software product available for Apple® Mac® computers running macOS® 12 or higher. FreeBSD is a fully supported guest operating system. Once VMware Fusion has been installed on macOS®, the user can configure a virtual machine and then install the desired guest operating system.

24.3.1. Installing FreeBSD on VMware Fusion

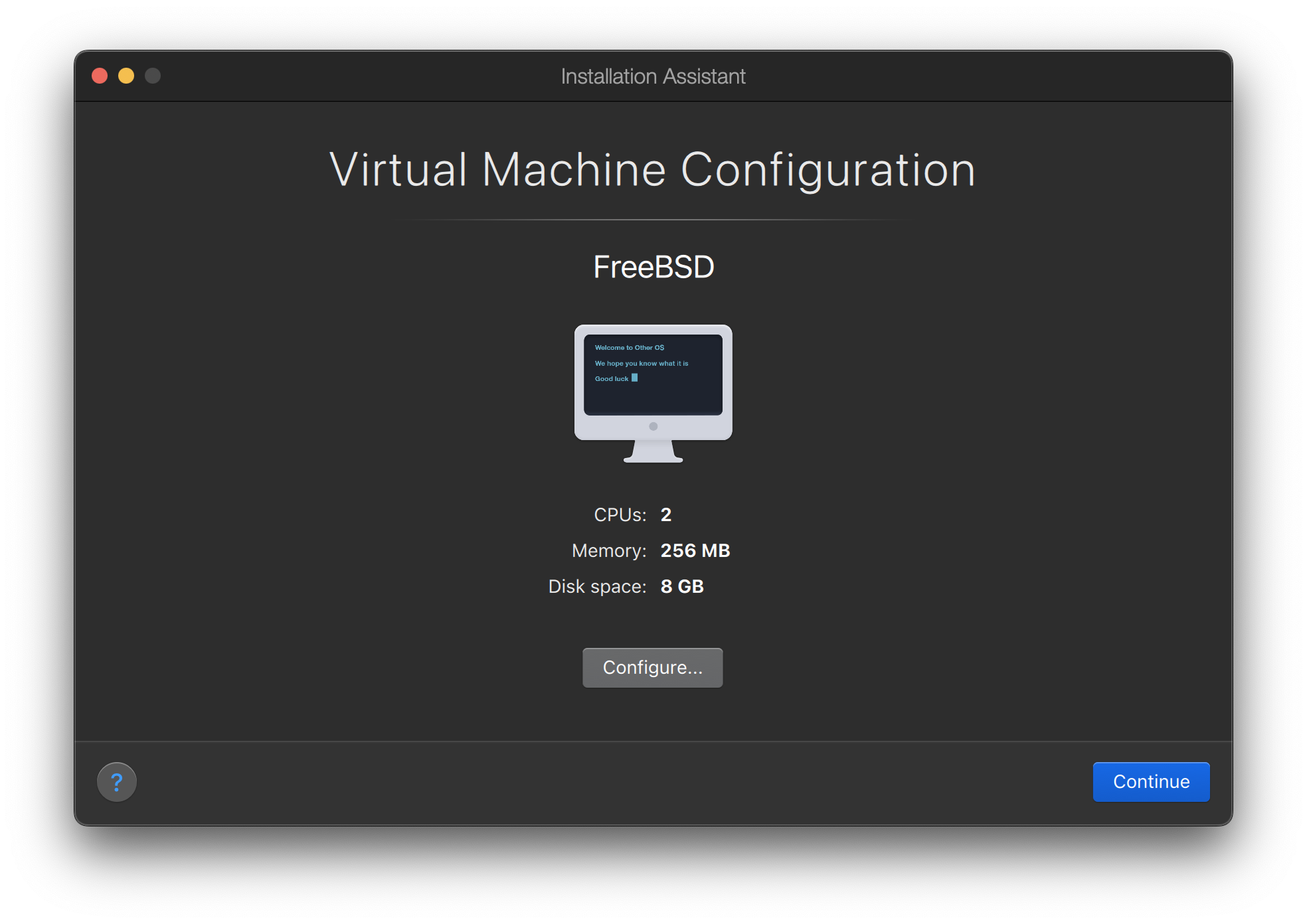

The first step is to start VMware Fusion, which will load the Virtual Machine Library. Choose to create a new virtual machine:

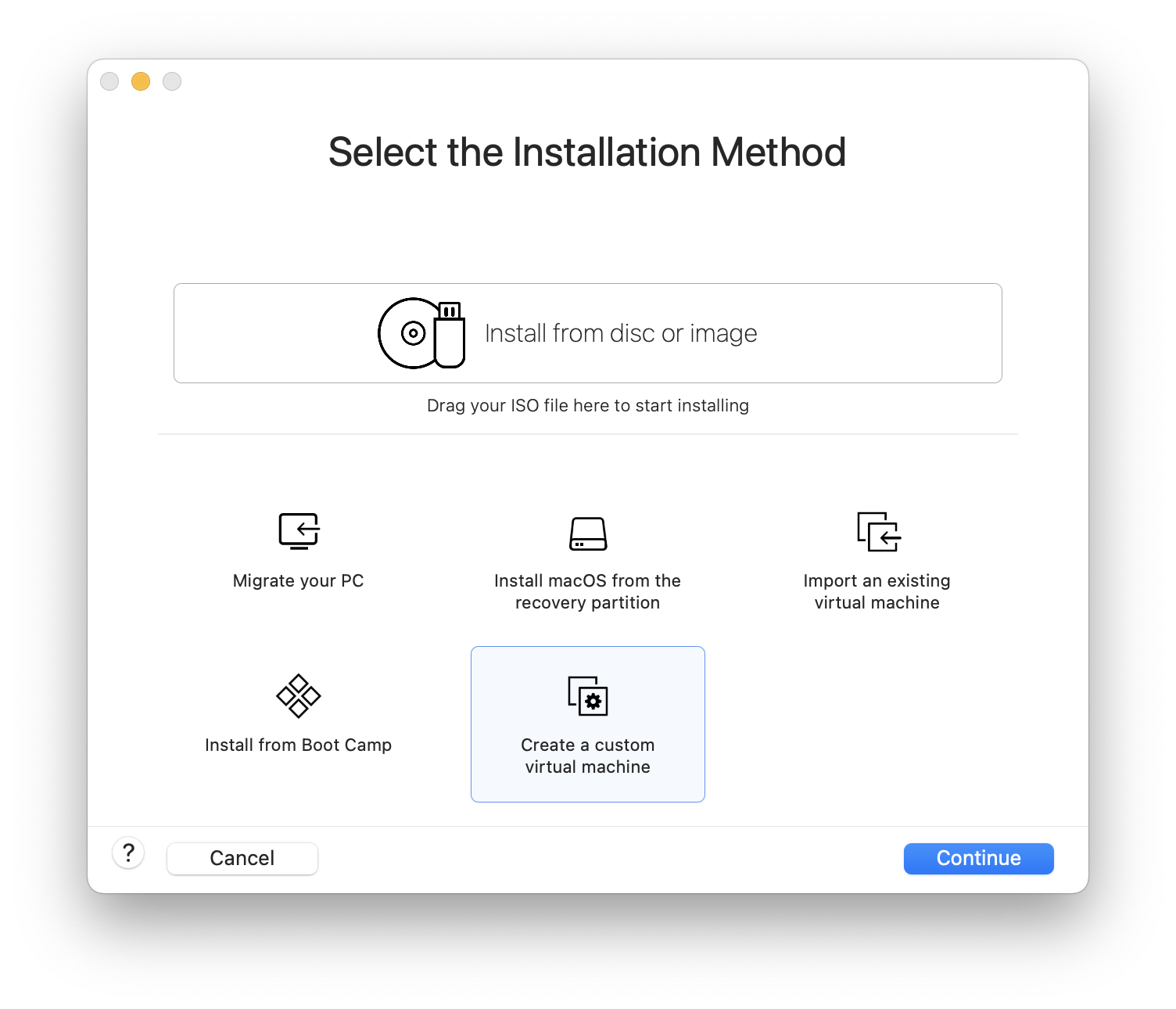

This will load the New Virtual Machine Assistant. Choose the desired option and continue:

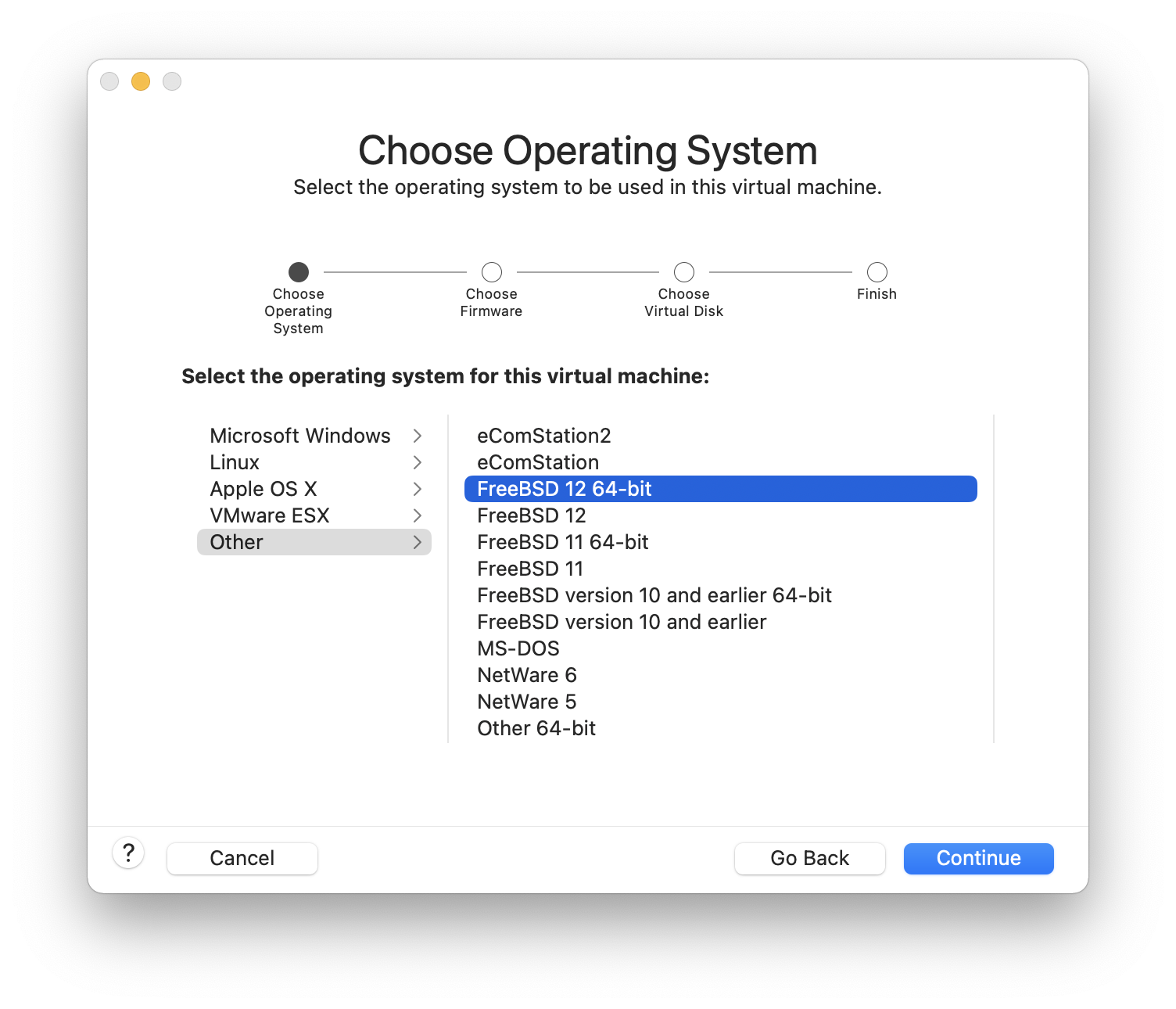

Select the operating system and the FreeBSD version when prompted:

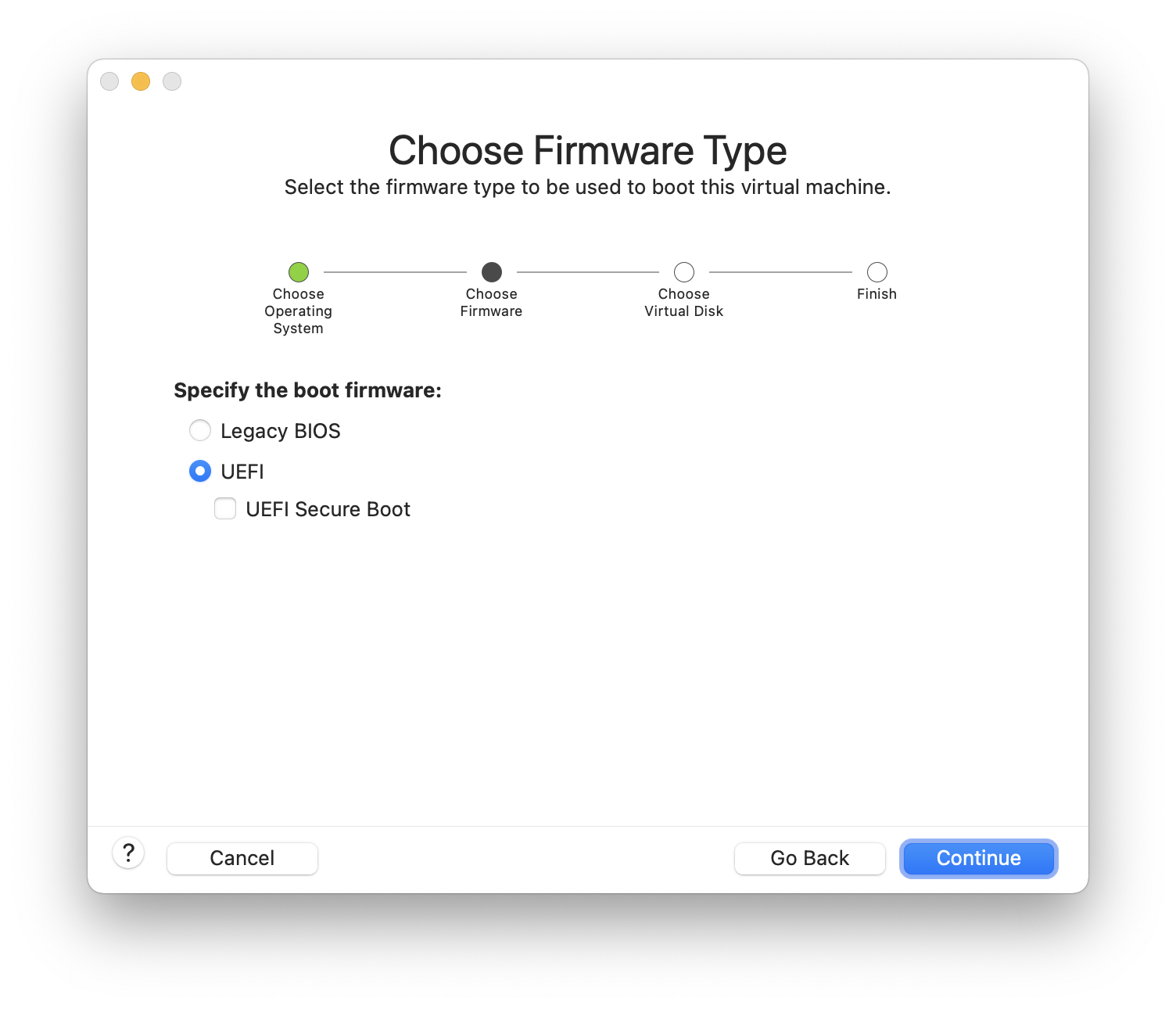

Choose the firmware(UEFI is recommended):

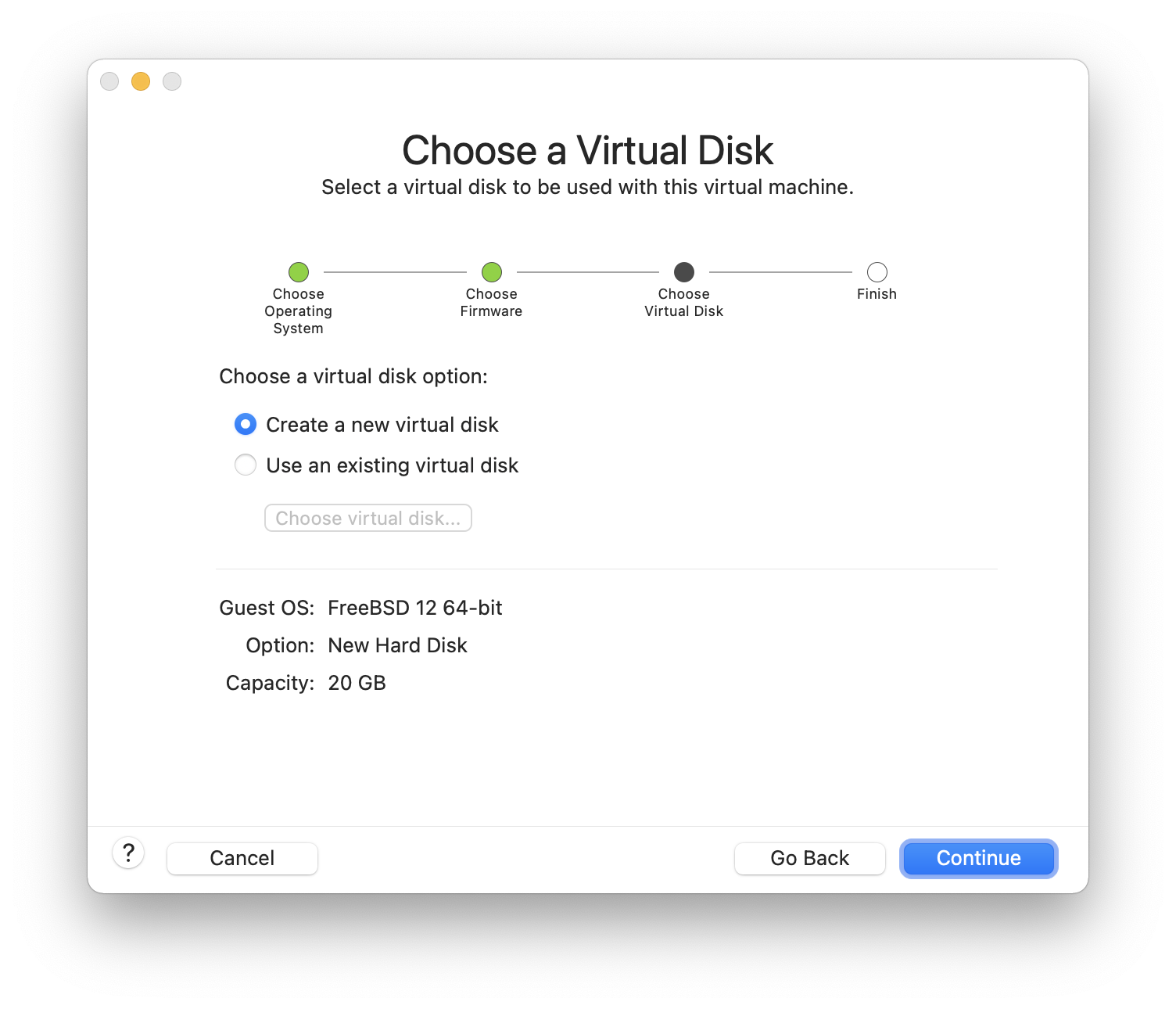

Select the appropriate option and continue:

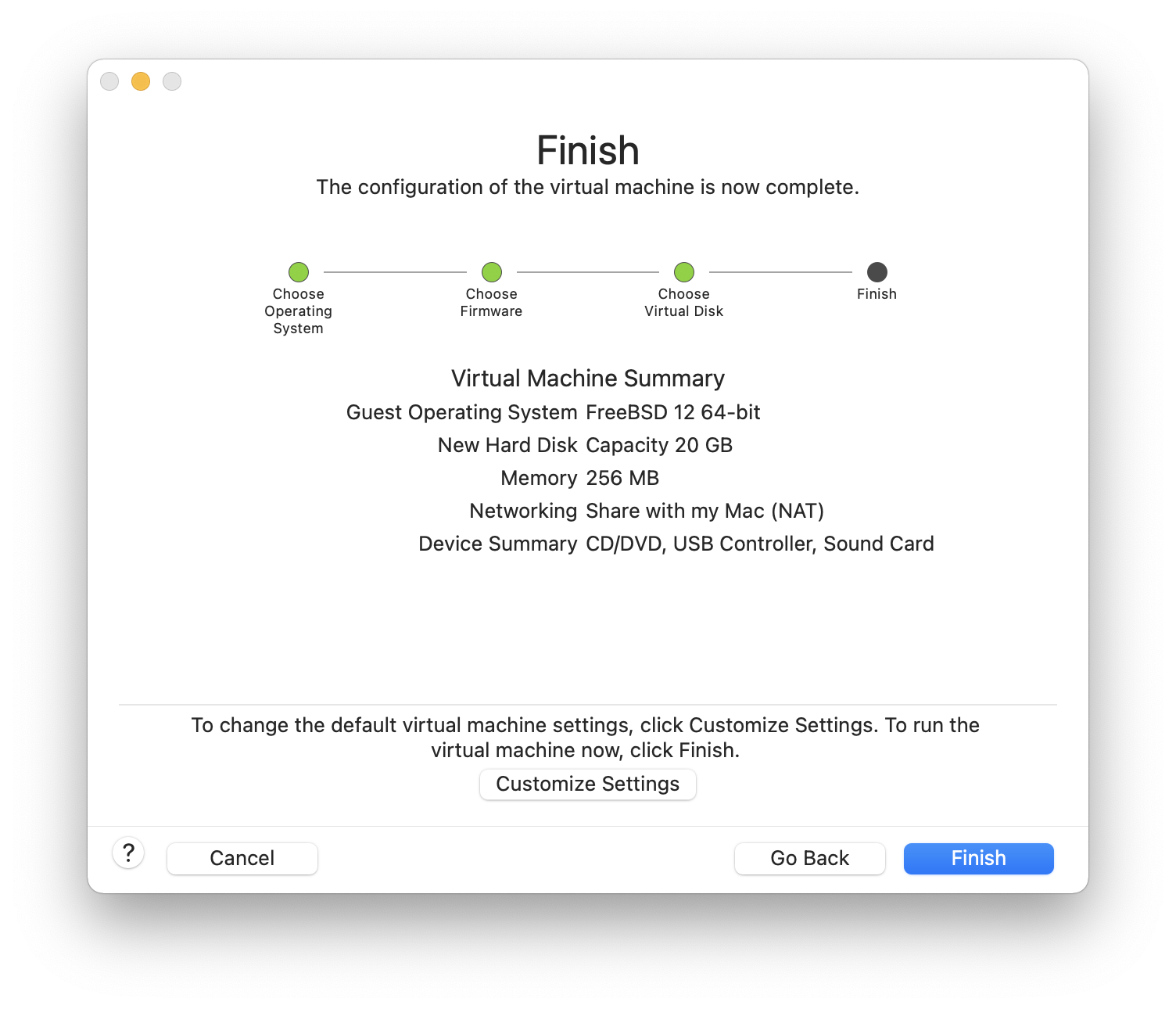

Check the configuration and finish the assistant:

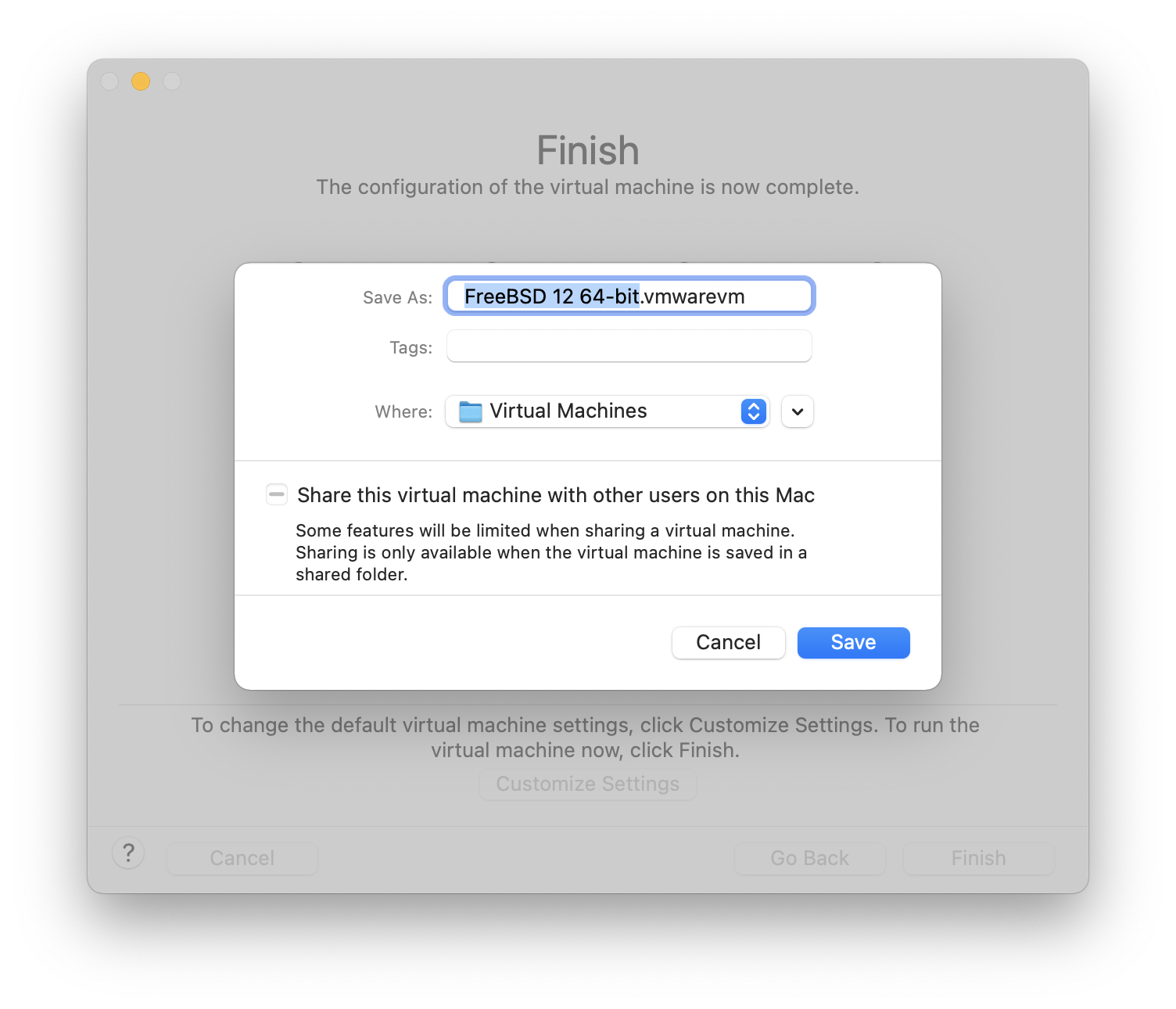

Choose the name of the virtual machine and the directory where it should be saved:

Press Command+E to open the virtual machine settings and select the CD/DVD device:

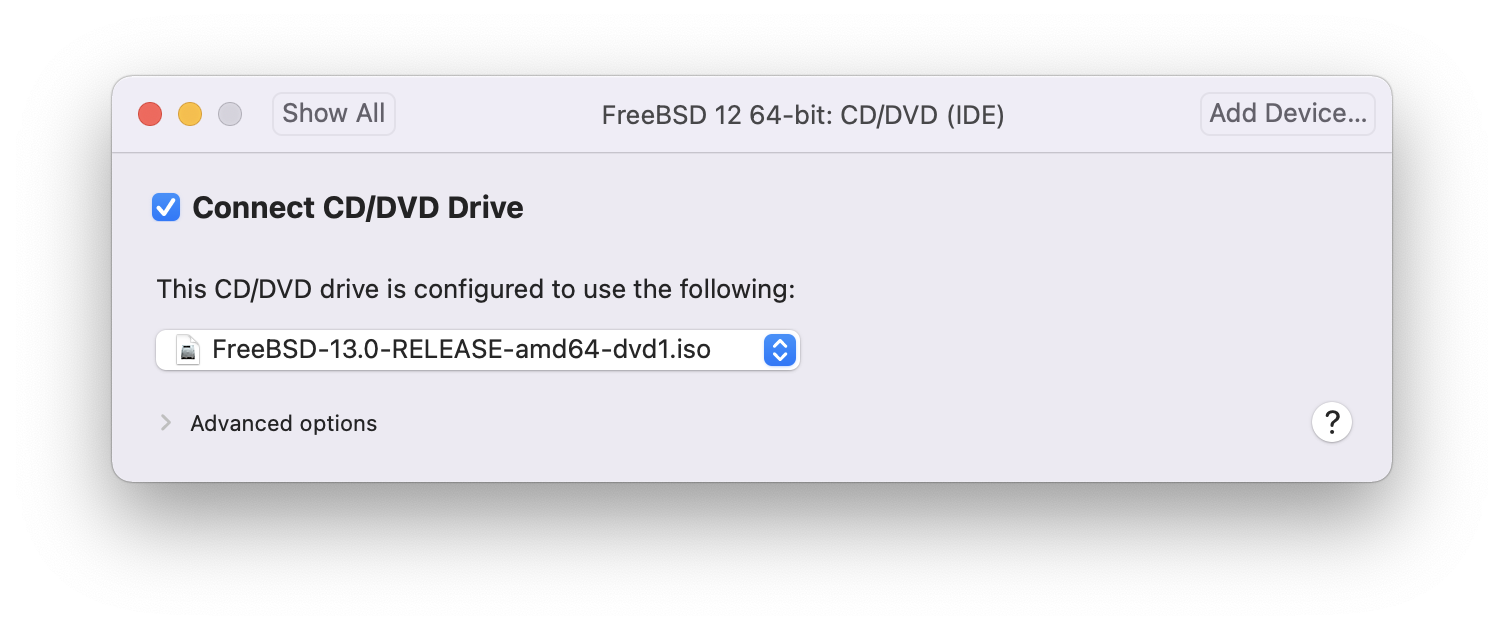

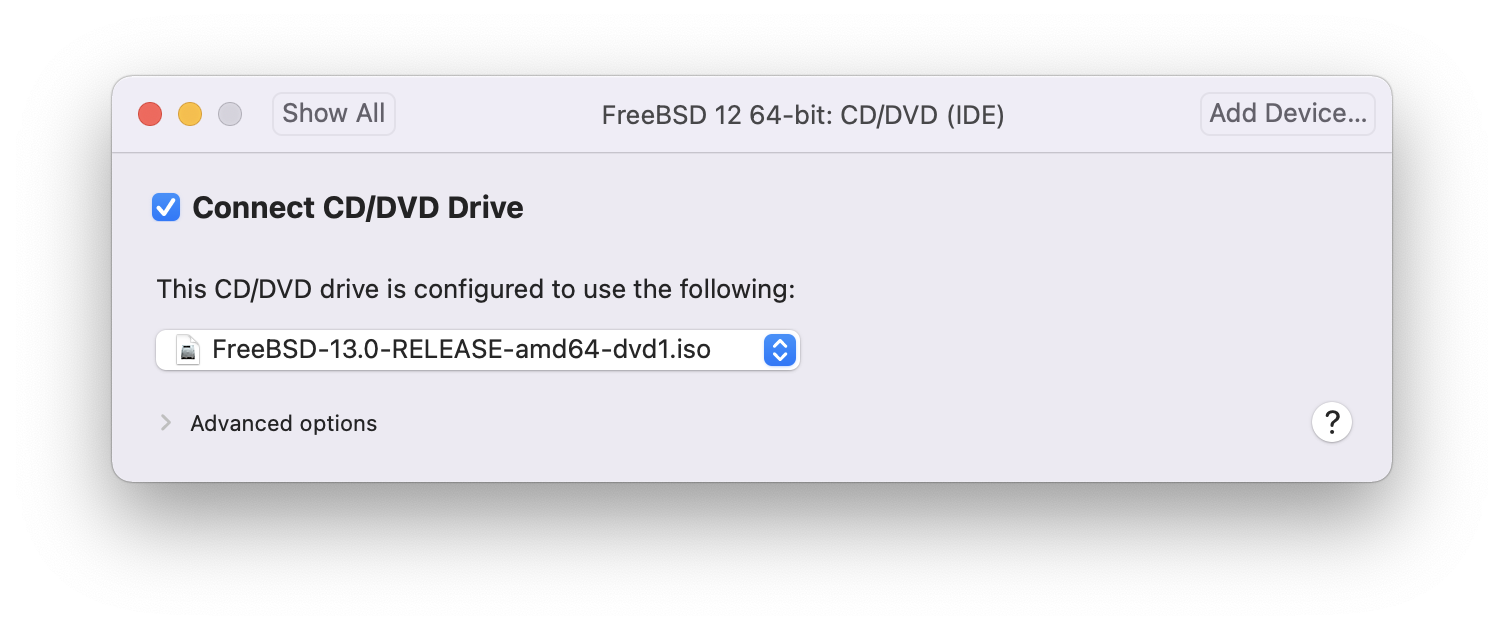

Choose the FreeBSD ISO image or a physical CD/DVD:

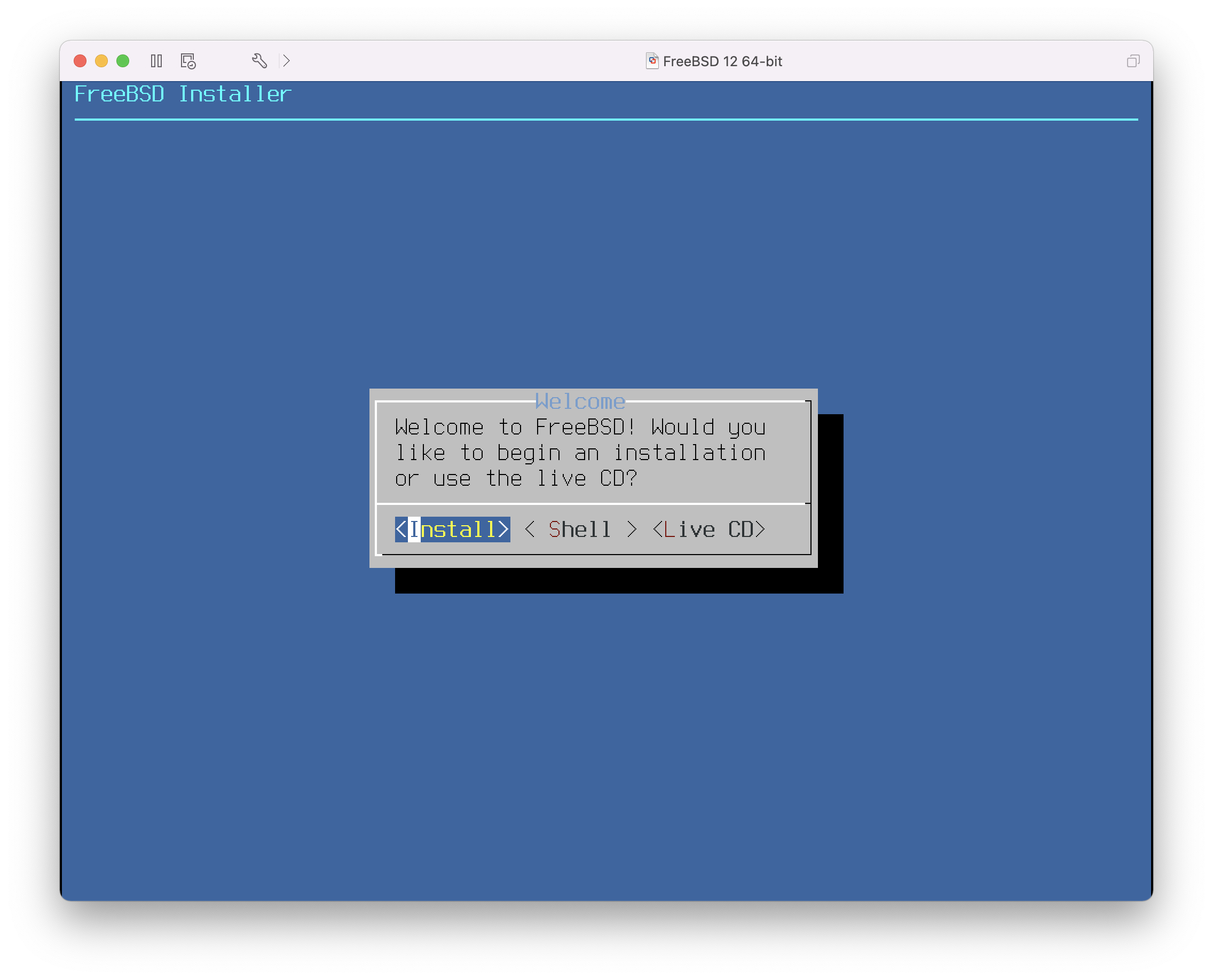

Start the virtual machine:

Install FreeBSD as usual:

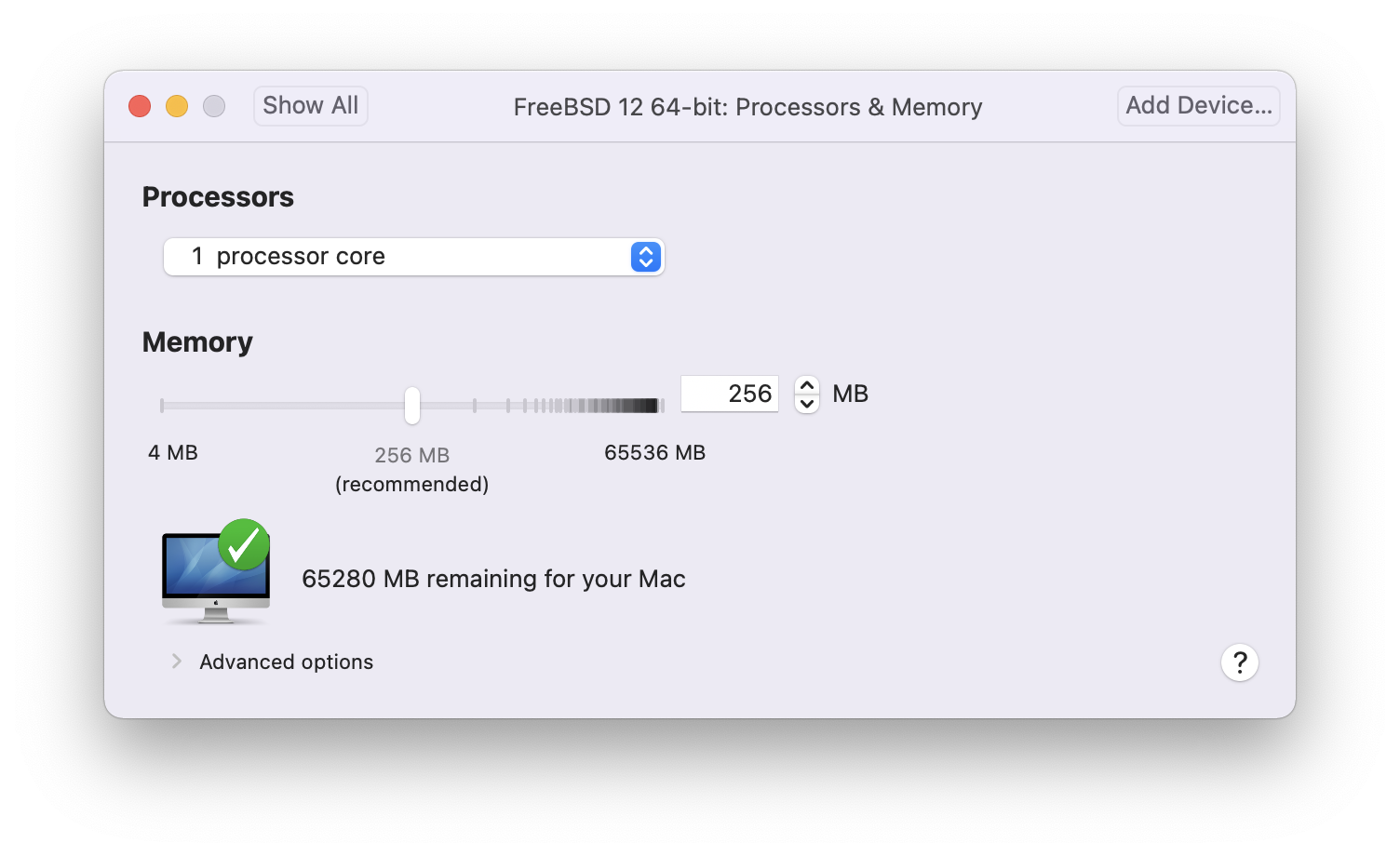

Once the install is complete, the settings of the virtual machine can be modified, such as memory usage and the number of CPUs the virtual machine will have access to:

The System Hardware settings of the virtual machine cannot be modified while the virtual machine is running.

Check the status of the CD-ROM device. Normally, the CD/DVD/ISO is disconnected from the virtual machine when it is no longer needed.

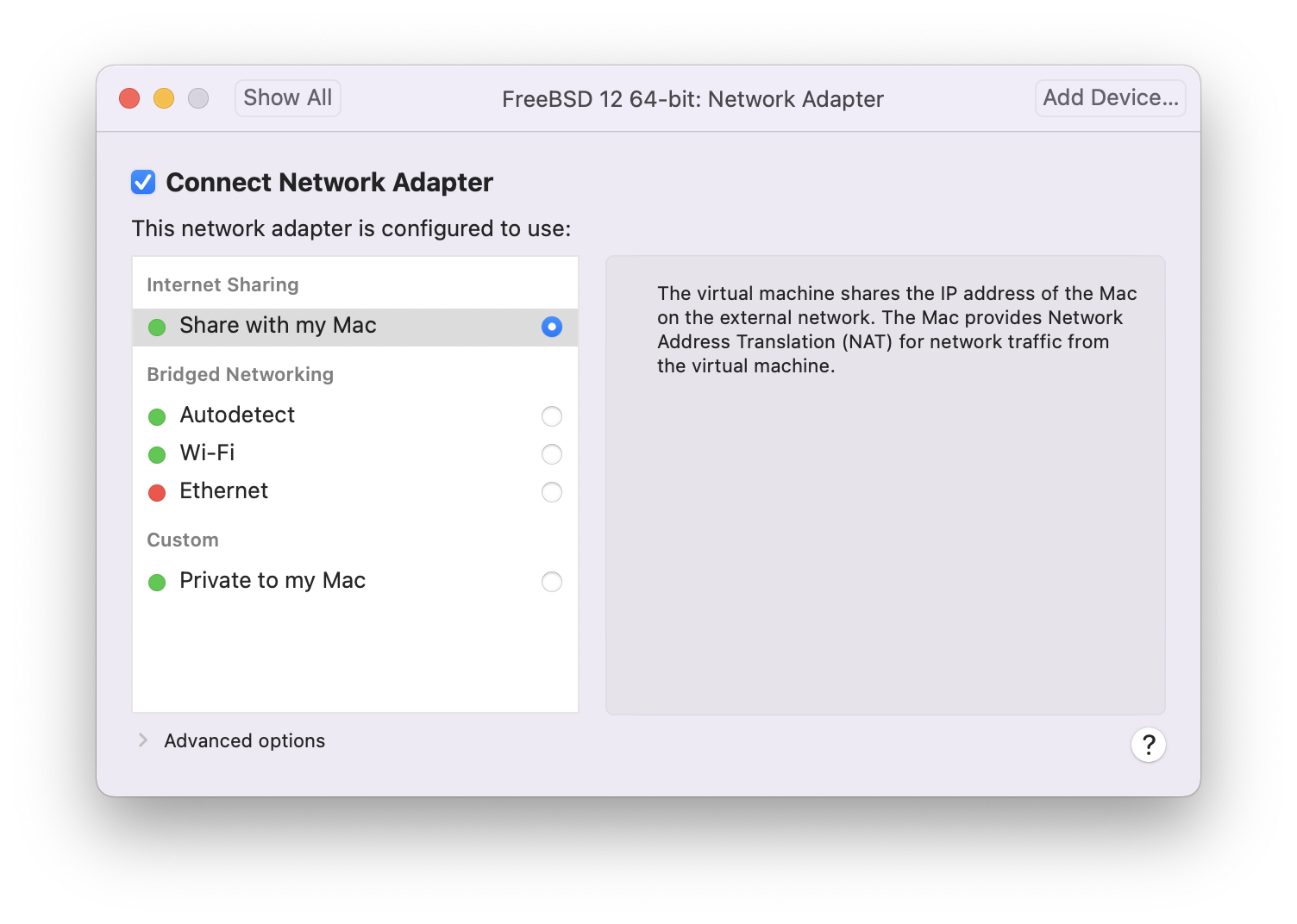

The last thing to change is how the virtual machine will connect to the network. To allow connections to the virtual machine from machines other than the host, choose bridged networking. Otherwise, NAT (sharing the host's connection) is preferred, so that the virtual machine can access the Internet but the network cannot access the virtual machine.

After modifying the settings, boot the newly installed FreeBSD virtual machine.

24.3.2. Configuring FreeBSD on VMware Fusion

After FreeBSD has been successfully installed on macOS® with VMware Fusion, there are a number of configuration steps that can be taken to optimize the system for virtualized operation.

- Set Boot Loader Variables

  The most important step is to lower the kern.hz tunable, which reduces the CPU utilization of FreeBSD under the VMware Fusion environment. This is accomplished by adding the following line to /boot/loader.conf:

  kern.hz=100

  Without this setting, an idle FreeBSD VMware Fusion guest will use roughly 15% of the CPU of a single-processor iMac®. After this change, the usage will be closer to 5%.

- Create a New Kernel Configuration File

- Configure Networking

  The most basic networking setup uses DHCP to connect the virtual machine to the same local area network as the host Mac®. This can be accomplished by adding ifconfig_em0="DHCP" to /etc/rc.conf. More advanced networking setups are described in Advanced Networking.

- Install drivers and open-vm-tools

  To run FreeBSD smoothly on VMware, install the following drivers and tools:

  # pkg install xf86-video-vmware xf86-input-vmmouse open-vm-tools

24.4. FreeBSD as a Guest on VirtualBox™

FreeBSD works well as a guest in VirtualBox™. The virtualization software is available for most common operating systems, including FreeBSD itself.

The VirtualBox™ guest additions provide support for:

- Clipboard sharing.
- Mouse pointer integration.
- Host time synchronization.
- Window scaling.
- Seamless mode.

These commands are run in the FreeBSD guest.

First, install the emulators/virtualbox-ose-additions package or port in the FreeBSD guest. To install it from the port:

# cd /usr/ports/emulators/virtualbox-ose-additions && make install clean

Add these lines to /etc/rc.conf:

vboxguest_enable="YES"
vboxservice_enable="YES"

If ntpd(8) or ntpdate(8) is used, disable host time synchronization:

vboxservice_flags="--disable-timesync"
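The choice between the two rc.conf variants above can be scripted. A minimal sketch, assuming ntpd or ntpdate is enabled through the usual ntpd_enable or ntpdate_enable variables in rc.conf; vbox_guest_rcconf is a hypothetical name. It only prints the lines, so they can be reviewed before being added to /etc/rc.conf.

```sh
#!/bin/sh
# vbox_guest_rcconf [RC_CONF]  (hypothetical helper)
# Prints the rc.conf lines for the VirtualBox guest additions. If RC_CONF
# (default: /etc/rc.conf) already enables ntpd or ntpdate, it also prints
# the flag that disables VirtualBox host time synchronization.
vbox_guest_rcconf() {
	local rc_conf="${1:-/etc/rc.conf}"
	printf '%s\n' 'vboxguest_enable="YES"' 'vboxservice_enable="YES"'
	# Matches ntpd_enable="YES", ntpdate_enable=yes, and similar spellings.
	if grep -Eiq '^[[:space:]]*(ntpd|ntpdate)_enable="?yes"?' "$rc_conf" 2>/dev/null; then
		printf '%s\n' 'vboxservice_flags="--disable-timesync"'
	fi
}

# Example: review the output first, then append it.
#   vbox_guest_rcconf > /tmp/vbox.rc && cat /tmp/vbox.rc >> /etc/rc.conf
```

Writing to a temporary file first avoids reading and appending to /etc/rc.conf in the same command.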

Xorg will automatically recognize the vboxvideo driver. It can also be manually entered in /etc/X11/xorg.conf:

Section "Device"
    Identifier "Card0"
    Driver "vboxvideo"
    VendorName "InnoTek Systemberatung GmbH"
    BoardName "VirtualBox Graphics Adapter"
EndSection

To use the vboxmouse driver, adjust the mouse section in /etc/X11/xorg.conf:

Section "InputDevice"
    Identifier "Mouse0"
    Driver "vboxmouse"
EndSection

Shared folders for file transfers between host and VM are accessible by mounting them using mount_vboxvfs. A shared folder can be created on the host using the VirtualBox GUI or via vboxmanage. For example, to create a shared folder called myshare under /mnt/bsdboxshare for the VM named BSDBox, run:

# vboxmanage sharedfolder add 'BSDBox' --name myshare --hostpath /mnt/bsdboxshare

Note that the shared folder name must not contain spaces. Mount the shared folder from within the guest system like this:

# mount_vboxvfs -w myshare /mnt

24.5. FreeBSD as a Host with VirtualBox™

VirtualBox™ is an actively developed, complete virtualization package that is available for most operating systems, including Windows®, macOS®, Linux® and FreeBSD. It is equally capable of running Windows® or UNIX®-like guests. It is released as open source software, but with closed-source components available in a separate extension pack. These components include support for USB 2.0 devices. More information may be found on the Downloads page of the VirtualBox™ wiki. Currently, these extensions are not available for FreeBSD.

24.5.1. Installing VirtualBox™

VirtualBox™ is available as a FreeBSD package or port in emulators/virtualbox-ose. The port can be installed using these commands:

# cd /usr/ports/emulators/virtualbox-ose
# make install clean

One useful option in the port’s configuration menu is the GuestAdditions suite of programs. These provide a number of useful features in guest operating systems, like mouse pointer integration (allowing the mouse to be shared between host and guest without the need to press a special keyboard shortcut to switch) and faster video rendering, especially in Windows® guests. The guest additions are available in the Devices menu after the installation of the guest is finished.

A few configuration changes are needed before VirtualBox™ is started for the first time. The port installs a kernel module in /boot/modules which must be loaded into the running kernel:

# kldload vboxdrv

To ensure the module is always loaded after a reboot, add this line to /boot/loader.conf:

vboxdrv_load="YES"

To use the kernel modules that allow bridged or host-only networking, add this line to /etc/rc.conf and reboot the computer:

vboxnet_enable="YES"

The vboxusers group is created during installation of VirtualBox™. All users that need access to VirtualBox™ will have to be added as members of this group. pw can be used to add new members:

# pw groupmod vboxusers -m yourusername

The default permissions for /dev/vboxnetctl are restrictive and need to be changed for bridged networking:

# chown root:vboxusers /dev/vboxnetctl
# chmod 0660 /dev/vboxnetctl

To make this permissions change permanent, add these lines to /etc/devfs.conf:

own vboxnetctl root:vboxusers
perm vboxnetctl 0660

To launch VirtualBox™, type from an Xorg session:

% VirtualBox

For more information on configuring and using VirtualBox™, refer to the official website. For FreeBSD-specific information and troubleshooting instructions, refer to the relevant page in the FreeBSD wiki.
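The persistent host settings from this section (the loader.conf, rc.conf, and devfs.conf lines) can be applied in one idempotent step. A minimal sketch; append_once and vbox_host_persist are hypothetical names, and the optional ROOT argument exists only so the function can be tried against a scratch directory.

```sh
#!/bin/sh
# Hypothetical helpers: persist the VirtualBox host settings from this
# section without adding duplicate lines when run more than once.

# append_once FILE LINE: append LINE to FILE unless an identical line exists.
append_once() {
	grep -qxF -- "$2" "$1" 2>/dev/null || printf '%s\n' "$2" >> "$1"
}

# vbox_host_persist [ROOT]: ROOT defaults to "" (the running system);
# point it at another directory to try the function out safely.
vbox_host_persist() {
	local root="${1:-}"
	append_once "$root/boot/loader.conf" 'vboxdrv_load="YES"'
	append_once "$root/etc/rc.conf" 'vboxnet_enable="YES"'
	append_once "$root/etc/devfs.conf" 'own vboxnetctl root:vboxusers'
	append_once "$root/etc/devfs.conf" 'perm vboxnetctl 0660'
}

# Example (as root); group membership is still set separately with pw:
#   vbox_host_persist
#   pw groupmod vboxusers -m yourusername
```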

24.5.2. VirtualBox™ USB Support

VirtualBox™ can be configured to pass USB devices through to the guest operating system. The host controller of the OSE version is limited to emulating USB 1.1 devices until the extension pack supporting USB 2.0 and 3.0 devices becomes available on FreeBSD.

For VirtualBox™ to be aware of USB devices attached to the machine, the user needs to be a member of the operator group.

# pw groupmod operator -m yourusername

Then, add the following to /etc/devfs.rules, or create this file if it does not exist yet:

[system=10]
add path 'usb/*' mode 0660 group operator

To load these new rules, add the following to /etc/rc.conf:

devfs_system_ruleset="system"

Then, restart devfs:

# service devfs restart

Restart the login session and VirtualBox™ for these changes to take effect, and create USB filters as necessary.

24.5.3. VirtualBox™ Host DVD/CD Access

Access to the host DVD/CD drives from guests is achieved through the sharing of the physical drives. Within VirtualBox™, this is set up from the Storage window in the Settings of the virtual machine. If needed, create an empty IDE CD/DVD device first. Then choose the Host Drive from the popup menu for the virtual CD/DVD drive selection. A checkbox labeled Passthrough will appear. This allows the virtual machine to use the hardware directly. For example, audio CDs or the burner will only function if this option is selected.

In order for users to be able to use VirtualBox™ DVD/CD functions, they need access to /dev/xpt0, /dev/cdN, and /dev/passN. This is usually achieved by making the user a member of operator. Permissions to these devices have to be corrected by adding these lines to /etc/devfs.conf:

perm cd* 0660
perm xpt0 0660
perm pass* 0660

Then, restart devfs:

# service devfs restart

24.6. FreeBSD as a Host with bhyve

The bhyve BSD-licensed hypervisor became part of the base system with FreeBSD 10.0-RELEASE. This hypervisor supports a number of guests, including FreeBSD, OpenBSD, and many Linux® distributions. By default, bhyve provides access to a serial console and does not emulate a graphical console. Virtualization offload features of newer CPUs are used to avoid the legacy methods of translating instructions and manually managing memory mappings.

The bhyve design requires a processor that supports Intel® Extended Page Tables (EPT) or AMD® Rapid Virtualization Indexing (RVI), also known as Nested Page Tables (NPT). Hosting Linux® guests or FreeBSD guests with more than one vCPU requires VMX unrestricted mode support (UG). Most newer processors, specifically the Intel® Core™ i3/i5/i7 and Intel® Xeon™ E3/E5/E7, support these features. UG support was introduced with Intel’s Westmere micro-architecture. For a complete list of Intel® processors that support EPT, refer to https://ark.intel.com/content/www/us/en/ark/search/featurefilter.html?productType=873&0_ExtendedPageTables=True. RVI is found on third-generation and later AMD Opteron™ (Barcelona) processors. The easiest way to tell if a processor supports bhyve is to run dmesg or look in /var/run/dmesg.boot for the POPCNT processor feature flag on the Features2 line for AMD® processors, or for EPT and UG on the VT-x line for Intel® processors.
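The check described above can be scripted. A minimal sketch, assuming the dmesg line formats it mentions (a VT-x: line listing comma-separated features such as EPT and UG on Intel, and a Features2= line on AMD); has_flag and bhyve_cpu_check are hypothetical names. Treat the result as a hint only: the exact dmesg format can differ between releases, and a feature can also be disabled in the firmware setup.

```sh
#!/bin/sh
# bhyve_cpu_check [DMESG_FILE]  (hypothetical helper)
# Applies the check described above to a dmesg.boot file:
#  - Intel: the VT-x line must list both EPT and UG;
#  - otherwise (AMD): the Features2 line must list POPCNT.

# has_flag LIST FLAG: succeeds if FLAG is an element of comma-separated LIST.
has_flag() {
	case ",$1," in
	*",$2,"*) return 0 ;;
	*) return 1 ;;
	esac
}

bhyve_cpu_check() {
	local dmesg="${1:-/var/run/dmesg.boot}" vtx flags
	vtx=$(grep -E '^[[:space:]]*VT-x:' "$dmesg" | head -n 1)
	if [ -n "$vtx" ]; then
		# Drop the "VT-x:" prefix and all whitespace, leaving "PAT,HLT,...".
		flags=$(printf '%s' "${vtx#*:}" | tr -d '[:space:]')
		if has_flag "$flags" EPT && has_flag "$flags" UG; then
			echo "Intel: EPT and UG found"
			return 0
		fi
		echo "Intel: EPT or UG missing"
		return 1
	fi
	if grep -E '^[[:space:]]*Features2=' "$dmesg" | grep -qw POPCNT; then
		echo "AMD: POPCNT found"
		return 0
	fi
	echo "required CPU features not found"
	return 1
}

# Example: bhyve_cpu_check            (reads /var/run/dmesg.boot)
```

Matching whole comma-separated elements avoids false positives from feature names that merely contain EPT or UG as a substring.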

24.6.1. Preparing the Host

The first step to creating a virtual machine in bhyve is configuring the host system. First, load the bhyve kernel module:

# kldload vmm

Then, create a tap interface for the network device in the virtual machine to attach to. In order for the network device to participate in the network, also create a bridge interface containing the tap interface and the physical interface as members. In this example, the physical interface is igb0:

# ifconfig tap0 create
# sysctl net.link.tap.up_on_open=1
net.link.tap.up_on_open: 0 -> 1
# ifconfig bridge0 create
# ifconfig bridge0 addm igb0 addm tap0
# ifconfig bridge0 up

24.6.2. Creating a FreeBSD Guest

Create a file to use as the virtual disk for the guest machine. Specify the size and name of the virtual disk:

# truncate -s 16G guest.img

Download an installation image of FreeBSD to install:

# fetch https://download.freebsd.org/releases/ISO-IMAGES/13.1/FreeBSD-13.1-RELEASE-amd64-bootonly.iso
FreeBSD-13.1-RELEASE-amd64-bootonly.iso 366 MB 16 MBps 22s

FreeBSD comes with an example script for running a virtual machine in bhyve. The script will start the virtual machine and run it in a loop, so it will automatically restart if it crashes. The script takes a number of options to control the configuration of the machine: -c controls the number of virtual CPUs, -m limits the amount of memory available to the guest, -t defines which tap device to use, -d indicates which disk image to use, -i tells bhyve to boot from the CD image instead of the disk, and -I defines which CD image to use. The last parameter is the name of the virtual machine, used to track the running machines. This example starts the virtual machine in installation mode:

# sh /usr/share/examples/bhyve/vmrun.sh -c 1 -m 1024M -t tap0 -d guest.img -i -I FreeBSD-13.1-RELEASE-amd64-bootonly.iso guestname

The virtual machine will boot and start the installer. After installing a system in the virtual machine, when the system asks about dropping into a shell at the end of the installation, choose Yes.
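vmrun.sh decides whether to start bhyve again from bhyve's exit status. The sketch below is modelled on that kind of loop, assuming the exit statuses documented in bhyve(8) (0 rebooted, 1 powered off, 2 halted, 3 triple fault, 4 exited due to an error). run_guest_loop, bhyve_exit_reason, the BHYVECTL override, and the start-guestname.sh wrapper in the example are hypothetical; for non-UEFI guests the wrapped command is assumed to load the kernel (with bhyveload or grub-bhyve) before starting bhyve on every pass.

```sh
#!/bin/sh
# Sketch of a vmrun.sh-style restart loop (hypothetical helper names).
# Exit statuses as documented in bhyve(8).
bhyve_exit_reason() {
	case "$1" in
	0) echo "rebooted" ;;
	1) echo "powered off" ;;
	2) echo "halted" ;;
	3) echo "triple fault" ;;
	4) echo "exited due to an error" ;;
	*) echo "unknown exit status $1" ;;
	esac
}

# run_guest_loop VMNAME COMMAND [ARGS...]
# Runs COMMAND again as long as it exits with 0 (the guest rebooted). When
# the guest was powered off or halted, the VM instance is destroyed so it
# can be started again later; after a triple fault or an error this sketch
# leaves it in place. Returns the last exit status.
run_guest_loop() {
	local vm="$1" rc
	shift
	while :; do
		"$@"
		rc=$?
		echo "$vm: $(bhyve_exit_reason "$rc")"
		[ "$rc" -eq 0 ] || break
	done
	case "$rc" in
	1|2) "${BHYVECTL:-bhyvectl}" --destroy --vm="$vm" >/dev/null 2>&1 ;;
	esac
	return "$rc"
}

# Example (as root, with a hypothetical wrapper script):
#   run_guest_loop guestname ./start-guestname.sh
```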

Reboot the virtual machine. While rebooting the virtual machine causes bhyve to exit, the vmrun.sh script runs bhyve in a loop and will automatically restart it. When this happens, choose the reboot option from the boot loader menu in order to escape the loop. Now the guest can be started from the virtual disk:

# sh /usr/share/examples/bhyve/vmrun.sh -c 4 -m 1024M -t tap0 -d guest.img guestname

24.6.3. Creating a Linux® Guest

In order to boot operating systems other than FreeBSD, the sysutils/grub2-bhyve port must first be installed.

Next, create a file to use as the virtual disk for the guest machine:

# truncate -s 16G linux.img

Starting a virtual machine with bhyve is a two-step process. First a kernel must be loaded, then the guest can be started. The Linux® kernel is loaded with sysutils/grub2-bhyve. Create a device.map that grub will use to map the virtual devices to the files on the host system:

(hd0) ./linux.img
(cd0) ./somelinux.iso
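The device.map above can also be written by a small helper, for example to produce a map without the ISO once it is no longer needed; whether the (cd0) entry is kept after installation is a matter of choice. A minimal sketch; write_device_map is a hypothetical name and the paths are the example ones from this section.

```sh
#!/bin/sh
# write_device_map FILE DISK [ISO]  (hypothetical helper)
# Writes a grub-bhyve device.map: DISK becomes (hd0) and, if given,
# ISO becomes (cd0). Omitting ISO gives a map containing only the disk.
write_device_map() {
	{
		printf '(hd0) %s\n' "$2"
		if [ -n "${3:-}" ]; then
			printf '(cd0) %s\n' "$3"
		fi
	} > "$1"
}

# Example matching the map above:
#   write_device_map device.map ./linux.img ./somelinux.iso
```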

Use sysutils/grub2-bhyve to load the Linux® kernel from the ISO image:

# grub-bhyve -m device.map -r cd0 -M 1024M linuxguest

This will start grub. If the installation CD contains a grub.cfg, a menu will be displayed. If not, the vmlinuz and initrd files must be located and loaded manually:

grub> ls
(hd0) (cd0) (cd0,msdos1) (host)
grub> ls (cd0)/isolinux
boot.cat boot.msg grub.conf initrd.img isolinux.bin isolinux.cfg memtest
splash.jpg TRANS.TBL vesamenu.c32 vmlinuz
grub> linux (cd0)/isolinux/vmlinuz
grub> initrd (cd0)/isolinux/initrd.img
grub> boot

Now that the Linux® kernel is loaded, the guest can be started:

# bhyve -A -H -P -s 0:0,hostbridge -s 1:0,lpc -s 2:0,virtio-net,tap0 -s 3:0,virtio-blk,./linux.img \
    -s 4:0,ahci-cd,./somelinux.iso -l com1,stdio -c 4 -m 1024M linuxguest

The system will boot and start the installer. After installing a system in the virtual machine, reboot the virtual machine. This will cause bhyve to exit. The instance of the virtual machine needs to be destroyed before it can be started again:

# bhyvectl --destroy --vm=linuxguest

Now the guest can be started directly from the virtual disk. Load the kernel:

# grub-bhyve -m device.map -r hd0,msdos1 -M 1024M linuxguest
grub> ls
(hd0) (hd0,msdos2) (hd0,msdos1) (cd0) (cd0,msdos1) (host)
(lvm/VolGroup-lv_swap) (lvm/VolGroup-lv_root)
grub> ls (hd0,msdos1)/
lost+found/ grub/ efi/ System.map-2.6.32-431.el6.x86_64 config-2.6.32-431.el6.x86_64
symvers-2.6.32-431.el6.x86_64.gz vmlinuz-2.6.32-431.el6.x86_64
initramfs-2.6.32-431.el6.x86_64.img
grub> linux (hd0,msdos1)/vmlinuz-2.6.32-431.el6.x86_64 root=/dev/mapper/VolGroup-lv_root
grub> initrd (hd0,msdos1)/initramfs-2.6.32-431.el6.x86_64.img
grub> boot

Boot the virtual machine:

# bhyve -A -H -P -s 0:0,hostbridge -s 1:0,lpc -s 2:0,virtio-net,tap0 \
    -s 3:0,virtio-blk,./linux.img -l com1,stdio -c 4 -m 1024M linuxguest

Linux® will now boot in the virtual machine and eventually present you with the login prompt. Log in and use the virtual machine. When you are finished, reboot the virtual machine to exit bhyve. Destroy the virtual machine instance:

# bhyvectl --destroy --vm=linuxguest

24.6.4. Booting bhyve Virtual Machines with UEFI Firmware

In addition to bhyveload and grub-bhyve, the bhyve hypervisor can also boot virtual machines using the UEFI userspace firmware. This option may support guest operating systems that are not supported by the other loaders.

In order to make use of the UEFI support in bhyve, first obtain the UEFI firmware images. This can be done by installing the sysutils/bhyve-firmware port or package.

With the firmware in place, add the flags -l bootrom,/path/to/firmware to your bhyve command line. The actual bhyve command may look like this:

# bhyve -AHP -s 0:0,hostbridge -s 1:0,lpc \
    -s 2:0,virtio-net,tap1 -s 3:0,virtio-blk,./disk.img \
    -s 4:0,ahci-cd,./install.iso -c 4 -m 1024M \
    -l bootrom,/usr/local/share/uefi-firmware/BHYVE_UEFI.fd \
    guest

sysutils/bhyve-firmware also contains a CSM-enabled firmware for booting guests with no UEFI support in legacy BIOS mode:

# bhyve -AHP -s 0:0,hostbridge -s 1:0,lpc \
    -s 2:0,virtio-net,tap1 -s 3:0,virtio-blk,./disk.img \
    -s 4:0,ahci-cd,./install.iso -c 4 -m 1024M \
    -l bootrom,/usr/local/share/uefi-firmware/BHYVE_UEFI_CSM.fd \
    guest

24.6.5. Graphical UEFI Framebuffer for bhyve Guests

The UEFI firmware support is particularly useful with predominantly graphical guest operating systems such as Microsoft Windows®.

Support for the UEFI-GOP framebuffer may also be enabled with the -s 29,fbuf,tcp=0.0.0.0:5900 flags. The framebuffer resolution may be configured with w=800 and h=600, and bhyve can be instructed to wait for a VNC connection before booting the guest by adding wait. The framebuffer may be accessed from the host or over the network via the VNC protocol. Additionally, -s 30,xhci,tablet can be added to achieve precise mouse cursor synchronization with the host.

The resulting bhyve command would look like this:

# bhyve -AHP -s 0:0,hostbridge -s 31:0,lpc \
    -s 2:0,virtio-net,tap1 -s 3:0,virtio-blk,./disk.img \
    -s 4:0,ahci-cd,./install.iso -c 4 -m 1024M \
    -s 29,fbuf,tcp=0.0.0.0:5900,w=800,h=600,wait \
    -s 30,xhci,tablet \
    -l bootrom,/usr/local/share/uefi-firmware/BHYVE_UEFI.fd \
    guest

Note that in BIOS emulation mode, the framebuffer will cease receiving updates once control is passed from the firmware to the guest operating system.
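When several guests share the same layout, the UEFI framebuffer command above can be assembled by a script. A minimal sketch, assuming the firmware paths from sysutils/bhyve-firmware used in this section; uefi_firmware, fbuf_spec, run_uefi_guest, FIRMWARE_DIR, and BHYVE are hypothetical names, not part of bhyve.

```sh
#!/bin/sh
# Sketch: assemble the UEFI framebuffer command shown above (hypothetical
# helper names). FIRMWARE_DIR defaults to the path used in this section;
# set BHYVE to another command (for example a function that prints its
# arguments) to inspect the command line instead of running it.
FIRMWARE_DIR="${FIRMWARE_DIR:-/usr/local/share/uefi-firmware}"

# uefi_firmware [csm]: path of the firmware image; "csm" selects the
# CSM-enabled image for guests without UEFI support.
uefi_firmware() {
	if [ "${1:-}" = csm ]; then
		printf '%s\n' "$FIRMWARE_DIR/BHYVE_UEFI_CSM.fd"
	else
		printf '%s\n' "$FIRMWARE_DIR/BHYVE_UEFI.fd"
	fi
}

# fbuf_spec SLOT ADDR PORT WIDTH HEIGHT [wait]: the -s value for the
# UEFI-GOP framebuffer; "wait" makes bhyve wait for a VNC connection.
fbuf_spec() {
	local spec="$1,fbuf,tcp=$2:$3,w=$4,h=$5"
	if [ "${6:-}" = wait ]; then
		spec="$spec,wait"
	fi
	printf '%s\n' "$spec"
}

# run_uefi_guest NAME TAP DISK ISO
run_uefi_guest() {
	"${BHYVE:-bhyve}" -AHP -s 0:0,hostbridge -s 31:0,lpc \
		-s 2:0,virtio-net,"$2" -s 3:0,virtio-blk,"$3" \
		-s 4:0,ahci-cd,"$4" -c 4 -m 1024M \
		-s "$(fbuf_spec 29 0.0.0.0 5900 800 600 wait)" \
		-s 30,xhci,tablet \
		-l bootrom,"$(uefi_firmware)" \
		"$1"
}

# Example (as root): run_uefi_guest guest tap1 ./disk.img ./install.iso
```

Keeping the framebuffer specification in one function makes it easy to change the resolution or the listen address, for example to 127.0.0.1 so that VNC is only reachable from the host.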

24.6.6. Using ZFS with bhyve Guests

If ZFS is available on the host machine, using ZFS volumes instead of disk image files can provide significant performance benefits for the guest VMs. A ZFS volume can be created by:

# zfs create -V16G -o volmode=dev zroot/linuxdisk0

When starting the VM, specify the ZFS volume as the disk drive:

# bhyve -A -H -P -s 0:0,hostbridge -s 1:0,lpc -s 2:0,virtio-net,tap0 -s 3:0,virtio-blk,/dev/zvol/zroot/linuxdisk0 \
    -l com1,stdio -c 4 -m 1024M linuxguest

24.6.7. Virtual Machine Consoles

It is advantageous to wrap the bhyve console in a session management tool such as sysutils/tmux or sysutils/screen in order to detach and reattach to the console. It is also possible to have the console of bhyve be a null modem device that can be accessed with cu. To do this, load the nmdm kernel module and replace -l com1,stdio with -l com1,/dev/nmdm0A. The /dev/nmdm devices are created automatically as needed, where each is a pair, corresponding to the two ends of the null modem cable (/dev/nmdm0A and /dev/nmdm0B). See nmdm(4) for more information.

# kldload nmdm
# bhyve -A -H -P -s 0:0,hostbridge -s 1:0,lpc -s 2:0,virtio-net,tap0 -s 3:0,virtio-blk,./linux.img \
    -l com1,/dev/nmdm0A -c 4 -m 1024M linuxguest
# cu -l /dev/nmdm0B
Connected

Ubuntu 13.10 handbook ttyS0

handbook login:

24.6.8. Managing Virtual Machines

A device node is created in /dev/vmm for each virtual machine. This allows the administrator to easily see a list of the running virtual machines:

# ls -al /dev/vmm
total 1
dr-xr-xr-x 2 root wheel 512 Mar 17 12:19 ./
dr-xr-xr-x 14 root wheel 512 Mar 17 06:38 ../
crw------- 1 root wheel 0x1a2 Mar 17 12:20 guestname
crw------- 1 root wheel 0x19f Mar 17 12:19 linuxguest
crw------- 1 root wheel 0x1a1 Mar 17 12:19 otherguest

A specified virtual machine can be destroyed using bhyvectl:

# bhyvectl --destroy --vm=guestname

24.6.9. Persistent Configuration

In order to configure the system to start bhyve guests at boot time, the following configurations must be made in the specified files:

- /etc/sysctl.conf

  net.link.tap.up_on_open=1

- /etc/rc.conf

  cloned_interfaces="bridge0 tap0"
  ifconfig_bridge0="addm igb0 addm tap0"
  kld_list="nmdm vmm"
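With several guests, each one usually needs its own tap interface attached to the bridge. A minimal sketch that generates the rc.conf lines above for a given physical interface and number of tap interfaces; bhyve_rcconf is a hypothetical name, and its output should be reviewed before being added to /etc/rc.conf. The net.link.tap.up_on_open=1 line still belongs in /etc/sysctl.conf.

```sh
#!/bin/sh
# bhyve_rcconf PHYS_IF NTAPS  (hypothetical helper)
# Prints rc.conf lines that create bridge0 plus tap0..tap(NTAPS-1) and
# add PHYS_IF and every tap interface to the bridge.
bhyve_rcconf() {
	local phys="$1" ntaps="$2" i=0 taps="" members
	members="addm $phys"
	while [ "$i" -lt "$ntaps" ]; do
		taps="$taps tap$i"
		members="$members addm tap$i"
		i=$((i + 1))
	done
	printf 'cloned_interfaces="bridge0%s"\n' "$taps"
	printf 'ifconfig_bridge0="%s"\n' "$members"
	printf '%s\n' 'kld_list="nmdm vmm"'
}

# Example: "bhyve_rcconf igb0 1" prints the three lines shown above;
# "bhyve_rcconf igb0 3" adds tap1 and tap2 as well.
```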

24.7. FreeBSD as a Xen™-Host

Xen is a GPLv2-licensed type 1 hypervisor for Intel® and ARM® architectures. FreeBSD has included i386™ and AMD® 64-Bit DomU and Amazon EC2 unprivileged domain (virtual machine) support since FreeBSD 8.0, and has included Dom0 control domain (host) support since FreeBSD 11.0. Support for para-virtualized (PV) domains has been removed from FreeBSD 11 in favor of hardware virtualized (HVM) domains, which provide better performance.

Xen™ is a bare-metal hypervisor, which means that it is the first program loaded after the BIOS. A special privileged guest called the Domain-0 (Dom0 for short) is then started. The Dom0 uses its special privileges to directly access the underlying physical hardware, making it a high-performance solution. It is able to access the disk controllers and network adapters directly. The Xen™ management tools to manage and control the Xen™ hypervisor are also used by the Dom0 to create, list, and destroy VMs. Dom0 provides virtual disks and networking for unprivileged domains, often called DomU. Xen™ Dom0 can be compared to the service console of other hypervisor solutions, while the DomU is where individual guest VMs are run.

Xen™ can migrate VMs between different Xen™ servers. When the two Xen™ hosts share the same underlying storage, the migration can be done without having to shut the VM down first. Instead, the migration is performed live while the DomU is running and there is no need to restart it or plan a downtime. This is useful in maintenance scenarios or upgrade windows to ensure that the services provided by the DomU are still provided. Many more features of Xen™ are listed on the Xen Wiki Overview page. Note that not all features are supported on FreeBSD yet.

24.7.1. Hardware Requirements for Xen™ Dom0

To run the Xen™ hypervisor on a host, certain hardware functionality is required. Hardware virtualized domains require Extended Page Table (EPT) and Input/Output Memory Management Unit (IOMMU) support in the host processor.

In order to run a FreeBSD Xen™ Dom0, the machine must be booted using legacy boot (BIOS).

24.7.2. Xen™ Dom0 Control Domain Setup

Users of FreeBSD 11 should install the emulators/xen-kernel47 and sysutils/xen-tools47 packages that are based on Xen version 4.7. Systems running on FreeBSD-12.0 or newer can use Xen 4.11 provided by emulators/xen-kernel411 and sysutils/xen-tools411, respectively.

Configuration files must be edited to prepare the host for the Dom0 integration after the Xen packages are installed. An entry in /etc/sysctl.conf disables the limit on how many pages of memory are allowed to be wired. Otherwise, DomU VMs with higher memory requirements will not run.

# echo 'vm.max_wired=-1' >> /etc/sysctl.conf

Another memory-related setting involves changing /etc/login.conf, setting the memorylocked option to unlimited. Otherwise, creating DomU domains may fail with Cannot allocate memory errors. After making the change to /etc/login.conf, run cap_mkdb to update the capability database. See Resource Limits for details.

# sed -i '' -e 's/memorylocked=64K/memorylocked=unlimited/' /etc/login.conf
# cap_mkdb /etc/login.conf

Add an entry for the Xen™ console to /etc/ttys:

# echo 'xc0 "/usr/libexec/getty Pc" xterm onifconsole secure' >> /etc/ttys

Selecting a Xen™ kernel in /boot/loader.conf activates the Dom0. Xen™ also requires resources like CPU and memory from the host machine for itself and other DomU domains. How much CPU and memory depends on the individual requirements and hardware capabilities. In this example, 8 GB of memory and 4 virtual CPUs are made available for the Dom0. The serial console is also activated and logging options are defined.

The following commands are used for Xen 4.7 packages:

# echo 'hw.pci.mcfg=0' >> /boot/loader.conf
# echo 'if_tap_load="YES"' >> /boot/loader.conf
# echo 'xen_kernel="/boot/xen"' >> /boot/loader.conf
# echo 'xen_cmdline="dom0_mem=8192M dom0_max_vcpus=4 dom0pvh=1 console=com1,vga com1=115200,8n1 guest_loglvl=all loglvl=all"' >> /boot/loader.conf

For Xen versions 4.11 and higher, the following commands should be used instead:

# echo 'if_tap_load="YES"' >> /boot/loader.conf
# echo 'xen_kernel="/boot/xen"' >> /boot/loader.conf
# echo 'xen_cmdline="dom0_mem=8192M dom0_max_vcpus=4 dom0=pvh console=com1,vga com1=115200,8n1 guest_loglvl=all loglvl=all"' >> /boot/loader.conf

Log files that Xen™ creates for the DomU VMs are stored in /var/log/xen. Be sure to check the contents of that directory if experiencing issues.
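The two loader.conf variants above differ only in hw.pci.mcfg=0 and in the Dom0 PVH option. A minimal sketch that prints either variant, assuming the memory and vCPU values are adjusted to suit the host; xen_loader_conf is a hypothetical name, and any version other than 4.7 is assumed to be Xen 4.11 or newer. Like the echo commands, appending its output twice duplicates the entries, so check /boot/loader.conf first.

```sh
#!/bin/sh
# xen_loader_conf VERSION DOM0_MEM DOM0_VCPUS  (hypothetical helper)
# Prints the /boot/loader.conf lines from this section. VERSION "4.7"
# selects the Xen 4.7 variant (hw.pci.mcfg=0 and dom0pvh=1); any other
# value is assumed to be Xen 4.11 or newer (dom0=pvh).
xen_loader_conf() {
	local version="$1" mem="$2" vcpus="$3" pvh
	if [ "$version" = 4.7 ]; then
		printf '%s\n' 'hw.pci.mcfg=0'
		pvh='dom0pvh=1'
	else
		pvh='dom0=pvh'
	fi
	printf '%s\n' 'if_tap_load="YES"' 'xen_kernel="/boot/xen"'
	printf 'xen_cmdline="dom0_mem=%s dom0_max_vcpus=%s %s console=com1,vga com1=115200,8n1 guest_loglvl=all loglvl=all"\n' \
		"$mem" "$vcpus" "$pvh"
}

# Example (as root, Xen 4.11 or newer, 8 GB and 4 vCPUs for the Dom0):
#   xen_loader_conf 4.11 8192M 4 >> /boot/loader.conf
```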

Activate the xencommons service during system startup:

# sysrc xencommons_enable=yes

These settings are enough to start a Dom0-enabled system. However, the system lacks network functionality for the DomU machines. To fix that, define a bridged interface with the main NIC of the system which the DomU VMs can use to connect to the network. Replace em0 with the host network interface name.

# sysrc cloned_interfaces="bridge0"
# sysrc ifconfig_bridge0="addm em0 SYNCDHCP"
# sysrc ifconfig_em0="up"

Restart the host to load the Xen™ kernel and start the Dom0.

# reboot

After successfully booting the Xen™ kernel and logging into the system again, the Xen™ management tool xl is used to show information about the domains.

# xl list
Name ID Mem VCPUs State Time(s)
Domain-0 0 8192 4 r----- 962.0

The output confirms that the Dom0 (called Domain-0) has the ID 0 and is running. It also has the memory and virtual CPUs that were defined in /boot/loader.conf earlier. More information can be found in the Xen™ Documentation. DomU guest VMs can now be created.

24.7.3. Xen™ DomU Guest VM Configuration

Unprivileged domains consist of a configuration file and virtual or physical hard disks. Virtual disk storage for the DomU can be files created by truncate(1) or ZFS volumes as described in “Creating and Destroying Volumes”. In this example, a 20 GB volume is used. A VM is created with the ZFS volume, a FreeBSD ISO image, 1 GB of RAM and two virtual CPUs. The ISO installation file is retrieved with fetch(1) and saved locally in a file called freebsd.iso.

# fetch https://download.freebsd.org/releases/ISO-IMAGES/13.1/FreeBSD-13.1-RELEASE-amd64-bootonly.iso -o freebsd.iso

A ZFS volume of 20 GB called xendisk0 is created to serve as the disk space for the VM.

# zfs create -V20G -o volmode=dev zroot/xendisk0

The new DomU guest VM is defined in a file. Some specific definitions like name, keymap, and VNC connection details are also defined. The following freebsd.cfg contains a minimum DomU configuration for this example:

# cat freebsd.cfg
builder = "hvm" # (1)
name = "freebsd" # (2)
memory = 1024 # (3)
vcpus = 2 # (4)
vif = [ 'mac=00:16:3E:74:34:32,bridge=bridge0' ] # (5)
disk = [
'/dev/zvol/zroot/xendisk0,raw,hda,rw', # (6)
'/root/freebsd.iso,raw,hdc:cdrom,r' # (7)
]
vnc = 1 # (8)
vnclisten = "0.0.0.0"
serial = "pty"
usbdevice = "tablet"

These lines are explained in more detail:

(1) This defines what kind of virtualization to use. hvm refers to hardware-assisted virtualization or hardware virtual machine. Guest operating systems can run unmodified on CPUs with virtualization extensions, providing nearly the same performance as running on physical hardware. generic is the default value and creates a PV domain.

(2) Name of this virtual machine to distinguish it from others running on the same Dom0. Required.

(3) Quantity of RAM in megabytes to make available to the VM. This amount is subtracted from the hypervisor’s total available memory, not the memory of the Dom0.

(4) Number of virtual CPUs available to the guest VM. For best performance, do not create guests with more virtual CPUs than the number of physical CPUs on the host.

(5) Virtual network adapter. This is the bridge connected to the network interface of the host. The mac parameter is the MAC address set on the virtual network interface. This parameter is optional; if no MAC is provided, Xen™ will generate a random one.

(6) Full path to the disk, file, or ZFS volume of the disk storage for this VM. Options and multiple disk definitions are separated by commas.

(7) Defines the boot medium from which the initial operating system is installed. In this example, it is the ISO image downloaded earlier. Consult the Xen™ documentation for other kinds of devices and options to set.

(8) Options controlling VNC connectivity and the console of the DomU. In order, these are: active VNC support, the IP address on which to listen, the device node for the serial console, and the input method for precise positioning of the mouse and other input methods. keymap defines which keymap to use, and is english by default.
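Because the installation and post-installation configurations differ only in the cdrom entry, the file can be generated from a few parameters. A minimal sketch that writes a configuration in the style of freebsd.cfg; gen_domu_cfg is a hypothetical name, the bridge is fixed to bridge0 as in this example, and the generated file carries no callout comments.

```sh
#!/bin/sh
# gen_domu_cfg NAME MEMORY_MB VCPUS MAC DISK [ISO]  (hypothetical helper)
# Prints a DomU configuration in the style of freebsd.cfg. When ISO is
# omitted, no cdrom entry is written, which matches the configuration
# used after the operating system has been installed.
gen_domu_cfg() {
	local name="$1" mem="$2" vcpus="$3" mac="$4" disk="$5" iso="${6:-}" disks
	disks="'$disk,raw,hda,rw'"
	if [ -n "$iso" ]; then
		disks="$disks, '$iso,raw,hdc:cdrom,r'"
	fi
	cat <<EOF
builder = "hvm"
name = "$name"
memory = $mem
vcpus = $vcpus
vif = [ 'mac=$mac,bridge=bridge0' ]
disk = [ $disks ]
vnc = 1
vnclisten = "0.0.0.0"
serial = "pty"
usbdevice = "tablet"
EOF
}

# Example: the installation configuration, then the post-install one.
#   gen_domu_cfg freebsd 1024 2 00:16:3E:74:34:32 /dev/zvol/zroot/xendisk0 /root/freebsd.iso > freebsd.cfg
#   gen_domu_cfg freebsd 1024 2 00:16:3E:74:34:32 /dev/zvol/zroot/xendisk0 > freebsd.cfg
```

Generating the disk list on one line keeps the entries correctly separated whether or not the ISO is present.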

After the file has been created with all the necessary options, the DomU is created by passing it to xl create as a parameter.

# xl create freebsd.cfg

Each time the Dom0 is restarted, the configuration file must be passed to xl create again to re-create the DomU.

The output of xl list confirms that the DomU has been created.

# xl list
Name          ID   Mem VCPUs   State   Time(s)
Domain-0       0  8192     4   r-----   1653.4
freebsd        1  1024     1   -b----    663.9

To begin the installation of the base operating system, start the VNC client, directing it to the main network address of the host or to the IP address defined on the vnclisten line of freebsd.cfg. After the operating system has been installed, shut down the DomU and disconnect the VNC viewer. Edit freebsd.cfg, removing the line with the cdrom definition or commenting it out by inserting a # character at the beginning of the line. To load this new configuration, remove the old DomU with xl destroy, passing either its name or its ID as the parameter. Afterwards, recreate it using the modified freebsd.cfg.

# xl destroy freebsd
# xl create freebsd.cfg

The machine can then be accessed again using the VNC viewer. This time, it will boot from the virtual disk where the operating system has been installed and can be used as a virtual machine.
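Commenting out the cdrom entry can also be scripted. This is a minimal sketch, assuming the installer ISO is the only entry in freebsd.cfg that contains :cdrom; comment_out_cdrom is a hypothetical helper name, and because it rewrites the file in place, keeping a copy of the original configuration is a sensible precaution.

```shell
#!/bin/sh
# Hypothetical helper: prefix with "#" every line of an xl config that
# mentions ":cdrom" (for example the installer ISO in the disk list), so the
# DomU boots from its virtual disk when it is next created.
comment_out_cdrom() {
    cfg=${1:?usage: comment_out_cdrom config-file}
    tmp=$(mktemp) || return 1
    # Only lines where ":cdrom" appears before any "#" are changed, so running
    # the function twice does not add a second "#".
    if sed '/^[^#]*:cdrom/s/^/#/' "$cfg" > "$tmp"; then
        cat "$tmp" > "$cfg"    # rewrite in place, keeping the file's permissions
        status=0
    else
        status=1
    fi
    rm -f "$tmp"
    return "$status"
}
```

It would be run on the configuration of the shut-down DomU, for example comment_out_cdrom freebsd.cfg, before the xl destroy and xl create commands shown above.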

24.7.4. Troubleshooting

This section contains basic information in order to help troubleshoot issues found when using FreeBSD as a Xen™ host or guest.

24.7.4.1. Host Boot Troubleshooting

Please note that the following troubleshooting tips are intended for Xen™ 4.11 or newer. If you are still using Xen™ 4.7 and are having issues, consider migrating to a newer version of Xen™.

To troubleshoot host boot issues, you will likely need a serial cable or a USB debug cable. Verbose Xen™ boot output can be obtained by adding options to the xen_cmdline option found in loader.conf. A couple of relevant debug options are:

-

iommu=debug: can be used to print additional diagnostic information about the IOMMU.

-

dom0=verbose: can be used to print additional diagnostic information about the Dom0 build process.

-

sync_console: flag to force synchronous console output. Useful for debugging to avoid losing messages due to rate limiting. Never use this option in production environments, since it can allow malicious guests to perform DoS attacks against Xen™ using the console.
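A minimal sketch of such a loader.conf line, assuming the host already boots Xen™ with a xen_cmdline similar to the one below. Only iommu=debug, the verbose flag in dom0=pvh,verbose, and sync_console are the debug options described above; the memory, vCPU, and serial console values are placeholders for whatever the host already uses, and the serial settings assume the cable is attached to COM1.

```
# /boot/loader.conf (fragment, for debugging only)
# Placeholder values: keep the options the host already passes to Xen and
# append the debug options. "dom0=pvh,verbose" adds verbose to an existing
# dom0=pvh setting. Remove sync_console once debugging is finished.
xen_cmdline="dom0_mem=8192M dom0_max_vcpus=4 dom0=pvh,verbose console=com1,vga com1=115200,8n1 guest_loglvl=all loglvl=all iommu=debug sync_console"
```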

FreeBSD should also be booted in verbose mode in order to identify any issues. To activate verbose booting, run this command:

# echo 'boot_verbose="YES"' >> /boot/loader.conf

If none of these options helps solve the problem, please send the serial boot log to [email protected] and [email protected] for further analysis.
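The echo command above appends to loader.conf, so running it a second time adds a duplicate boot_verbose line. A minimal sketch of an idempotent alternative, using a hypothetical enable_boot_verbose function that appends the setting only when the file has no boot_verbose line yet:

```shell
#!/bin/sh
# Hypothetical helper: add boot_verbose="YES" to loader.conf only when no
# boot_verbose line exists, so repeated runs do not keep appending it.
enable_boot_verbose() {
    conf=${1:-/boot/loader.conf}
    if grep -q '^[[:space:]]*boot_verbose=' "$conf" 2>/dev/null; then
        echo "boot_verbose is already set in $conf:"
        grep '^[[:space:]]*boot_verbose=' "$conf"
    else
        echo 'boot_verbose="YES"' >> "$conf"
    fi
}
```

An existing boot_verbose line is left untouched and printed, so a value of "NO" still has to be changed by hand.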

24.7.4.2. Guest Creation Troubleshooting

Issues can also arise when creating guests. The following tips are intended to help diagnose guest creation issues.

The most common sign of a guest creation failure is the xl command printing an error and exiting with a nonzero return code. If the error message is not enough to identify the issue, more verbose output can be obtained from xl by passing the -v option repeatedly.

# xl -vvv create freebsd.cfg
Parsing config from freebsd.cfg
libxl: debug: libxl_create.c:1693:do_domain_create: Domain 0:ao 0x800d750a0: create: how=0x0 callback=0x0 poller=0x800d6f0f0
libxl: debug: libxl_device.c:397:libxl__device_disk_set_backend: Disk vdev=xvda spec.backend=unknown
libxl: debug: libxl_device.c:432:libxl__device_disk_set_backend: Disk vdev=xvda, using backend phy
libxl: debug: libxl_create.c:1018:initiate_domain_create: Domain 1:running bootloader
libxl: debug: libxl_bootloader.c:328:libxl__bootloader_run: Domain 1:not a PV/PVH domain, skipping bootloader
libxl: debug: libxl_event.c:689:libxl__ev_xswatch_deregister: watch w=0x800d96b98: deregister unregistered
domainbuilder: detail: xc_dom_allocate: cmdline="", features=""
domainbuilder: detail: xc_dom_kernel_file: filename="/usr/local/lib/xen/boot/hvmloader"
domainbuilder: detail: xc_dom_malloc_filemap : 326 kB
libxl: debug: libxl_dom.c:988:libxl__load_hvm_firmware_module: Loading BIOS: /usr/local/share/seabios/bios.bin
...

If the verbose output does not help diagnose the issue, there are also QEMU and Xen™ toolstack logs in /var/log/xen. Note that the name of the domain is appended to the log name, so if the domain is named freebsd, you should find a /var/log/xen/xl-freebsd.log and likely a /var/log/xen/qemu-dm-freebsd.log. Both log files can contain useful information for debugging. If none of this helps solve the issue, please send a description of the issue you are facing and as much information as possible to [email protected] and [email protected] in order to get help.
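The two steps above, retrying a failed xl create with -vvv and then reading the per-domain logs, can be wrapped in small shell functions. This is a sketch, not part of the Xen™ tools: create_verbose and show_xen_logs are hypothetical names, the XL and XEN_LOG_DIR variables are hypothetical overrides (defaulting to xl and /var/log/xen), and the log file names follow the xl-DOMAIN.log and qemu-dm-DOMAIN.log pattern described above.

```shell
#!/bin/sh
# Hypothetical helpers for gathering guest creation diagnostics.
# XL and XEN_LOG_DIR are illustrative overrides (defaults: xl, /var/log/xen).

# create_verbose CFG [LOG]: try "xl create CFG"; if it fails, rerun it with
# -vvv and save the verbose output to LOG (default: xl-create-debug.log).
# Returns the status of xl create, or of the -vvv rerun after a failure.
create_verbose() {
    cfg=${1:?usage: create_verbose config-file [log-file]}
    log=${2:-xl-create-debug.log}
    xl_cmd=${XL:-xl}
    if "$xl_cmd" create "$cfg"; then
        return 0
    fi
    echo "xl create failed; rerunning with -vvv, output saved to $log" >&2
    "$xl_cmd" -vvv create "$cfg" > "$log" 2>&1
}

# show_xen_logs DOMAIN [LINES]: print the last LINES lines (default 20) of
# the toolstack and QEMU logs for DOMAIN; missing logs are reported on stderr.
show_xen_logs() {
    domain=${1:?usage: show_xen_logs domain-name [lines]}
    lines=${2:-20}
    logdir=${XEN_LOG_DIR:-/var/log/xen}
    for log in "$logdir/xl-$domain.log" "$logdir/qemu-dm-$domain.log"; do
        if [ -r "$log" ]; then
            echo "==> $log <=="
            tail -n "$lines" "$log"
        else
            echo "==> $log: not found" >&2
        fi
    done
}
```

For the example guest, this might be run as create_verbose freebsd.cfg followed by show_xen_logs freebsd 50, with the resulting xl-create-debug.log and log excerpts included in a report to the addresses above.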

Last modified: September 18, 2024 by fiercex